In this post, What GPT‑5.3 Instant Signals for the Future of Enterprise AI, I explore what this model release suggests about where enterprise AI architecture is heading, and what I think technology leaders should start designing for now.

I’ve been building and reviewing enterprise platforms for a long time, and one pattern I keep running into is this: the most important AI changes aren’t the flashy demos. They’re the subtle shifts in how models behave under real organisational pressure.

This post is my attempt to translate that subtle shift into architecture decisions. On the surface, GPT‑5.3 Instant looks like “just” a smoother, more reliable everyday model. In my experience, that kind of change is usually an early warning that the platform layer is moving under our feet.

High-level takeaway

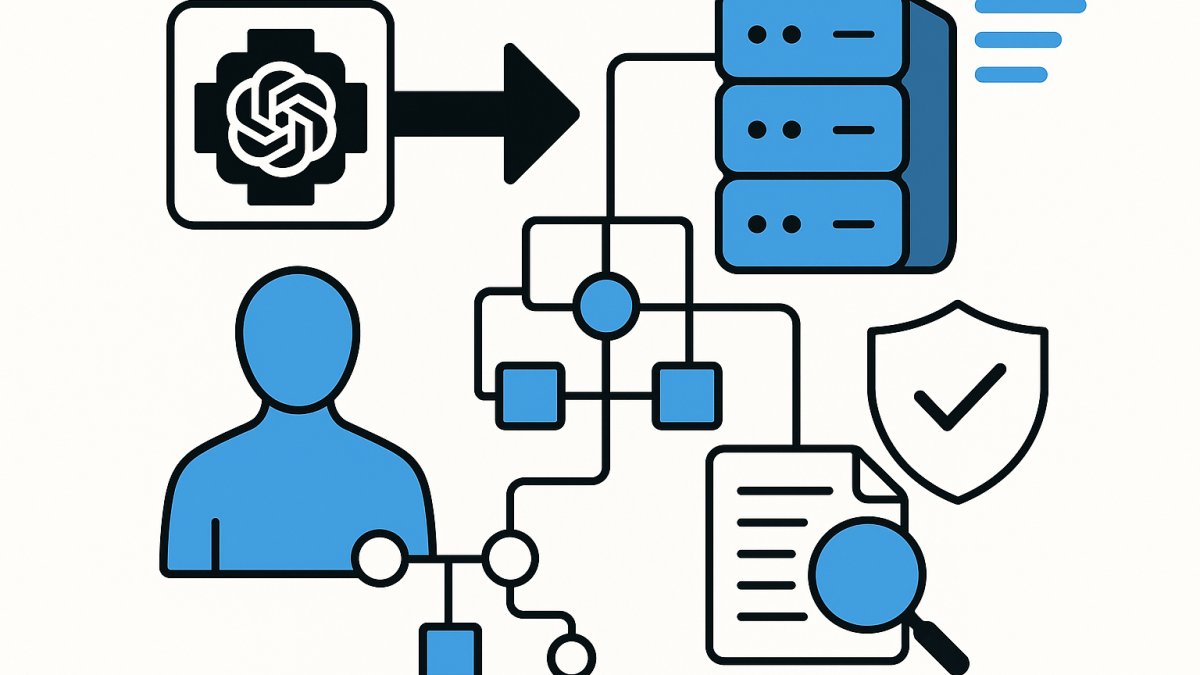

Here’s my headline: GPT‑5.3 Instant reinforces that the future enterprise AI stack won’t be “one model in one app.” It will be a routed system of models, tools, policies, and context that behaves consistently across many teams and many use cases.

In other words, architecture is coming back to the centre. Not because we love diagrams, but because without architecture, AI sprawl becomes a business risk.

What “Instant” really means in an enterprise context

“Instant” is often framed as latency and responsiveness. That matters, but I think the bigger story is that the model is being tuned for everyday usefulness under imperfect prompts, ambiguous questions, and time pressure.

In real organisations, that’s the default state. People don’t write perfect prompts. They ask half-questions in the middle of meetings, they paste messy emails, they mix policy and emotion, and they expect the system to still behave safely and helpfully.

The core technology behind it (in plain language)

At the platform level, GPT‑5.3 Instant signals a few technical directions that are becoming standard for “enterprise-grade” AI:

- Instruction tuning and preference tuning at scale so the model follows intent better and wastes less time on defensive boilerplate.

- Adaptive reasoning where the system can spend more effort on harder problems and less on easy ones, without you explicitly selecting a “slow thinking” mode every time.

- Retrieval and web-assisted answering patterns that improve relevance when the model must incorporate external, time-sensitive information.

- Safety policy execution that feels less like a brick wall and more like a guided boundary—still safe, but less disruptive to legitimate work.

None of those are “magic.” But combined, they push us toward a new architectural reality: the model is only one component in a broader orchestration and governance system.

Five architecture signals I take from GPT‑5.3 Instant

1) Model choice becomes a routing problem, not a procurement decision

For years, many organisations treated models like products: pick one, standardise, roll out. What I’m seeing now is closer to what we did with compute tiers and storage classes in cloud: we route work to the best fit.

Fast “Instant” interactions for everyday questions. Deeper reasoning models for complex analysis. Specialist code agents for development workflows. The architecture question becomes: how do we route predictably and auditably?

Practical step: define a simple routing taxonomy like:

- Tier 1: low-risk, low-cost, high-volume (everyday assistance)

- Tier 2: higher reasoning and higher cost (analysis, architecture, planning)

- Tier 3: restricted workloads (regulated data, sensitive decisions, security)
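
To make the taxonomy concrete, here is a minimal sketch of how a router might map requests to the three tiers and record each decision for audit. The `Request` fields, the task labels, and the function names are all hypothetical; a real router would draw on your own classification signals and model catalogue.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    EVERYDAY = 1    # Tier 1: low-risk, low-cost, high-volume
    REASONING = 2   # Tier 2: higher reasoning, higher cost
    RESTRICTED = 3  # Tier 3: regulated data, sensitive decisions, security


@dataclass(frozen=True)
class Request:
    user_role: str
    task_type: str  # hypothetical labels, e.g. "chat", "analysis", "security"
    contains_regulated_data: bool = False


# Assumed task labels for Tier 2; replace with your own taxonomy.
REASONING_TASKS = {"analysis", "architecture", "planning"}


def route(request: Request) -> Tier:
    """Pick a tier. Restricted checks run first so sensitive work
    can never fall through to a cheaper tier."""
    if request.contains_regulated_data or request.task_type == "security":
        return Tier.RESTRICTED
    if request.task_type in REASONING_TASKS:
        return Tier.REASONING
    return Tier.EVERYDAY


def route_with_audit(request: Request, audit_log: list) -> Tier:
    """Route and append a record so every decision can be reviewed later."""
    tier = route(request)
    audit_log.append(
        {"role": request.user_role, "task": request.task_type, "tier": tier.name}
    )
    return tier
```

The ordering of the checks is the design choice that matters here: the most restrictive rule is evaluated first, which keeps routing predictable when a request matches more than one tier.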

2) Safety is moving from “refuse” to “steer”

I’ve worked with organisations where the biggest AI complaint wasn’t hallucinations. It was the user experience of safety: the system refusing reasonable requests, or burying the answer under disclaimers.

GPT‑5.3 Instant’s shift toward fewer unnecessary refusals is a sign that safety is being implemented more as graded guidance than binary refusal. Architecturally, that means we need to treat safety as a policy engine, not a static checklist.

In Australia, this intersects with real governance expectations: Essential Eight maturity uplift, ASD/ACSC-aligned controls, and privacy obligations that require you to be intentional about data handling and access. The model’s safety behaviour won’t replace that; it will amplify whatever you’ve designed.

Practical step: design for multiple safety layers:

- Pre-processing classification (data sensitivity, user role, context)

- In-processing tool permissions and constrained actions (what the AI can do)

- Post-processing output checks (leakage, policy violations, citations where required internally)
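
The three layers above can be sketched as a small pipeline. This is an illustration under stated assumptions, not a production policy engine: the nine-digit pattern stands in for whatever sensitive identifiers matter to you, the role-to-tool table is invented, and `model` is any callable you supply.

```python
import re
from dataclasses import dataclass, field

# Assumption: a bare 9-digit number stands in for a sensitive identifier.
SENSITIVE_PATTERN = re.compile(r"\b\d{9}\b")

# Hypothetical role-to-tool mapping.
ROLE_TOOLS = {
    "engineer": {"search_docs", "create_ticket"},
    "analyst": {"search_docs"},
}


@dataclass
class Decision:
    allowed: bool
    reasons: list = field(default_factory=list)


def classify_input(text: str) -> str:
    """Pre-processing: label the request by data sensitivity."""
    return "sensitive" if SENSITIVE_PATTERN.search(text) else "general"


def permitted_tools(user_role: str, sensitivity: str) -> set:
    """In-processing: decide which tools the model may call."""
    tools = ROLE_TOOLS.get(user_role, set())
    if sensitivity == "sensitive":
        # Only read-only lookup is allowed when sensitive data is in play.
        return tools & {"search_docs"}
    return set(tools)


def check_output(text: str) -> Decision:
    """Post-processing: block drafts that appear to leak identifiers."""
    reasons = []
    if SENSITIVE_PATTERN.search(text):
        reasons.append("possible identifier leakage")
    return Decision(allowed=not reasons, reasons=reasons)


def handle(text: str, user_role: str, model) -> str:
    """Run one request through all three layers."""
    sensitivity = classify_input(text)
    tools = permitted_tools(user_role, sensitivity)
    draft = model(text, tools)
    verdict = check_output(draft)
    if verdict.allowed:
        return draft
    return "[withheld: " + "; ".join(verdict.reasons) + "]"
```

Each layer is a plain function, so policy owners can change one without touching the others.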

3) “Better answers with the web” is a preview of enterprise retrieval done right

When a model gets better at mixing external sources with reasoning, it reminds me of a lesson from enterprise search: retrieval quality isn’t just “did it find documents?” It’s “did it assemble the right answer for this decision?”

In enterprise architecture terms, this is the difference between:

- RAG as a feature (bolt on a vector database)

- RAG as a product capability (document pipelines, freshness SLAs, ranking, access control trimming, evaluation)

Practical step: treat knowledge as a pipeline with operational ownership:

- What is the source of truth?

- How quickly does new policy/technical guidance become searchable?

- How do we prevent “stale-but-confident” answers?

- How do we enforce permissions so retrieval respects Microsoft 365 / SharePoint / Purview boundaries?
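
As a rough sketch of the last three questions, the function below filters retrieved candidates by permission, deprecation status, and review age before ranking them. The `Doc` fields and the one-year freshness window are assumptions for illustration. It calls no Microsoft 365, SharePoint, or Purview API; in practice the group membership and labels would come from those systems.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Doc:
    doc_id: str
    score: float               # relevance score from the retriever
    last_reviewed: date        # when an owner last confirmed the content
    allowed_groups: frozenset  # groups permitted to read the document
    deprecated: bool = False


def trim_and_rank(candidates, user_groups, today, max_age_days=365):
    """Drop documents the user cannot see, deprecated documents, and
    documents not reviewed within the freshness window, then rank the
    remainder by relevance score (highest first)."""
    cutoff = today - timedelta(days=max_age_days)
    visible = [
        d for d in candidates
        if d.allowed_groups & user_groups
        and not d.deprecated
        and d.last_reviewed >= cutoff
    ]
    return sorted(visible, key=lambda d: d.score, reverse=True)
```

Note that filtering happens before ranking: a stale or out-of-scope document is never passed to the model, however well it scores.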

4) The “tone” improvements are actually a governance requirement

This one surprises people: tone sounds like branding, but it’s also risk. A model that overstates certainty, moralises, or speaks inconsistently creates operational drag. It triggers escalations, rework, and distrust.

In my experience, enterprise adoption is often won or lost on these “soft” edges. That’s why I view tone and conversational flow as part of the interface contract between employees and systems.

Practical step: define an organisational “AI interaction style guide” the same way you’d define an API style guide. The aim isn’t marketing polish; it’s consistent outcomes across teams.

5) The real platform is tool-use plus permissioning

As soon as a model becomes reliably useful, the next demand is predictable: “Can it do something, not just say something?” That’s where tool integration lands—ticketing, email drafts, runbooks, reporting, code changes, and security workflows.

This is where enterprise AI architecture gets serious. The model should not have broad, ambient power. It should have explicit tools with scoped permissions, strong logging, and human checkpoints for high-risk actions.

Practical step: implement a tool gateway that enforces:

- allowlisted actions per role

- data minimisation (only pass what the tool needs)

- full audit trails (who asked, what context, what actions were attempted)

- approval workflows for sensitive operations
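
Here is a minimal sketch of a gateway that enforces those four rules in one place. The class name, outcome strings, and field names are hypothetical, and the gateway only decides and logs; actually executing the tool call is left to whatever sits behind it.

```python
from dataclasses import dataclass, field


@dataclass
class ToolGateway:
    allowlist: dict         # role -> set of permitted action names
    sensitive_actions: set  # actions that need human approval first
    audit_log: list = field(default_factory=list)

    def request(self, user, role, action, payload, needed_fields, approved=False):
        """Decide whether an action may proceed and log the attempt.

        Returns (outcome, minimal_payload), where outcome is one of
        "allowed", "pending_approval", or "denied".
        """
        # Data minimisation: pass on only the fields the tool needs.
        minimal = {k: v for k, v in payload.items() if k in needed_fields}

        if action not in self.allowlist.get(role, set()):
            outcome = "denied"
        elif action in self.sensitive_actions and not approved:
            outcome = "pending_approval"
        else:
            outcome = "allowed"

        # Audit every attempt, including denied ones. Field names are
        # logged rather than values to keep sensitive data out of the log.
        self.audit_log.append({
            "user": user,
            "role": role,
            "action": action,
            "fields": sorted(minimal),
            "outcome": outcome,
        })
        return outcome, minimal
```

For example, with `allowlist={"engineer": {"create_ticket", "restart_service"}}` and `sensitive_actions={"restart_service"}`, an engineer's `create_ticket` call is allowed immediately, while `restart_service` returns `"pending_approval"` until it is re-submitted with `approved=True`.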

A real-world scenario I’ve seen (anonymised)

A large organisation rolled out an internal AI assistant to help with operational queries: policy questions, incident response steps, and change management guidance. The model was strong, but the implementation was fragile.

The assistant was connected to a document store without strong freshness controls. When policies changed, old documents still ranked highly. The AI answered confidently from outdated guidance, and engineers followed it because it sounded authoritative.

The fix was not “use a smarter model.” The fix was architectural:

- document ingestion with versioning and deprecation rules

- retrieval that preferred “current policy” sources

- access trimming tied to identity

- an evaluation harness that repeatedly tested high-stakes questions
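
The evaluation harness in that list does not need to be elaborate to be useful. The sketch below is not the organisation's actual harness. It is a simplified illustration that assumes each test case lists phrases an answer must and must not contain, and that `assistant` is any callable taking a question and returning text.

```python
def run_regression(assistant, cases):
    """Ask each high-stakes question and report the cases that fail.

    `cases` is a list of dicts with a "question" key and optional
    "must_include" / "must_not_include" lists of phrases. Matching is
    case-insensitive substring matching, which is crude but cheap enough
    to run on every document or model change.
    """
    failures = []
    for case in cases:
        answer = assistant(case["question"]).lower()
        missing = [
            p for p in case.get("must_include", []) if p.lower() not in answer
        ]
        forbidden = [
            p for p in case.get("must_not_include", []) if p.lower() in answer
        ]
        if missing or forbidden:
            failures.append({
                "question": case["question"],
                "missing": missing,
                "forbidden": forbidden,
            })
    return failures
```

A "must not include" phrase such as a superseded policy version is what catches the stale-but-confident failure described above.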

Once those pieces were in place, a faster, smoother model experience mattered more—because the platform underneath was trustworthy. That’s the lens I use when I look at GPT‑5.3 Instant: it raises the ceiling, but it also raises expectations on the architecture.

What I expect the next 12–24 months of enterprise AI architecture to look like

Based on what I’m seeing across Azure, Microsoft 365 ecosystems, and multi-model AI stacks, I expect these to become normal:

- AI gateways as standard platform components (routing, policy, logging, cost controls).

- Evaluation as an operational practice, not a one-off project (quality, safety, and regression testing).

- Context engineering becoming a real discipline (what context is used, how it’s retrieved, and how it’s governed).

- Security architecture patterns that align AI tools with Essential Eight uplift, identity controls, and auditability.

- Multi-agent and specialist agent workflows where “Instant” handles conversational front doors and other agents execute constrained tasks behind the scenes.

Closing reflection

As a published author and an enterprise architect based in Melbourne, I’m biased toward systems thinking. GPT‑5.3 Instant reinforces my belief that enterprise AI success won’t come from picking the “best model.” It will come from building a platform that makes good AI behaviour repeatable across the organisation.

The question I’m sitting with is this: when AI becomes everyone’s daily interface to systems and knowledge, are we designing our architecture so it earns trust by default—or are we hoping trust will emerge on its own?
