In this blog post, OpenAI Hosted on Azure: What Microsoft Really Means for Enterprises, we will unpack what “hosted on Azure” really means in practical, architectural terms, and why the nuance matters for risk, governance, and platform decisions.

When I hear “OpenAI is hosted on Azure,” I don’t translate it as a marketing line. I translate it as a set of concrete implications about where model workloads run, how traffic enters the service, what’s inside (and outside) your tenant boundary, and who controls the operational knobs.

In this post, I’ll explain the concept at a high level first, then go one layer deeper into the technology and the real-world trade-offs I’ve seen across Azure, Microsoft 365, and enterprise security programs in Australia.

High-level first: what “hosted on Azure” is trying to convey

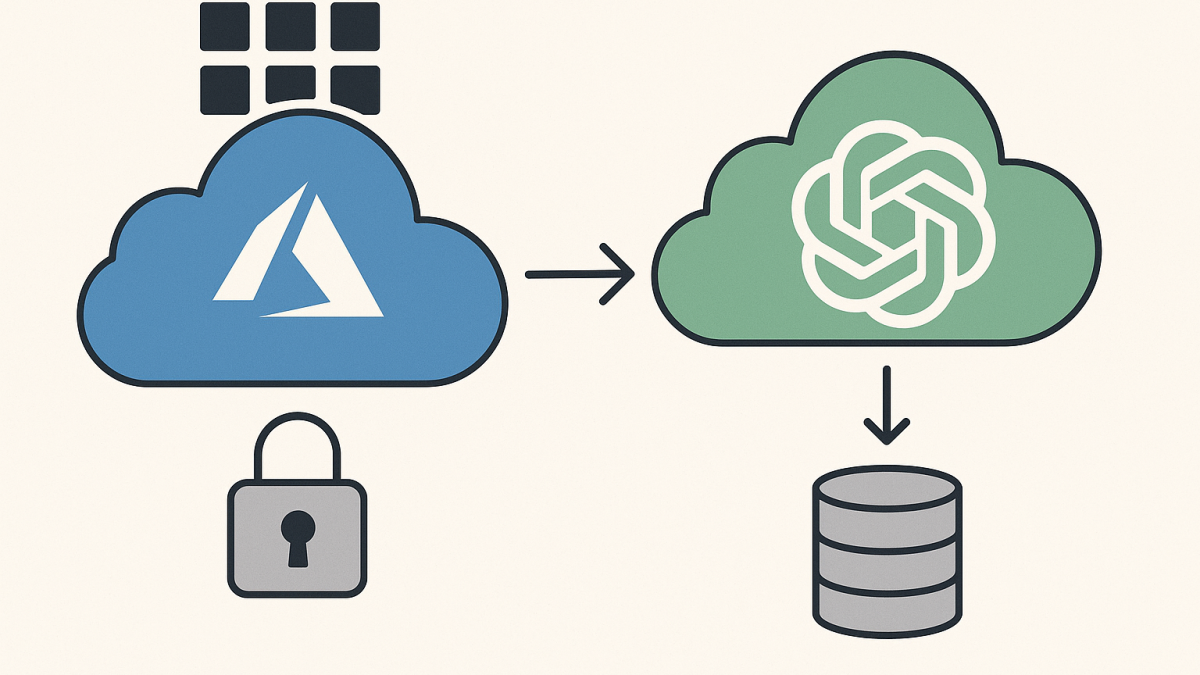

At a boardroom level, “hosted on Azure” is shorthand for one claim: the compute and storage that run the models are provisioned and operated on Microsoft’s cloud infrastructure, rather than in OpenAI-owned data centres or on another hyperscaler.

But that phrase hides a lot of detail. In practice, it can refer to multiple things at once: OpenAI’s consumer products, OpenAI’s partner-delivered APIs, and Microsoft’s own enterprise productisation of OpenAI models through Azure services.

So the right question isn’t “Is it on Azure?” The right question is: which OpenAI capability, under what commercial terms, through which endpoint, and with what data handling controls?

The core technology behind it: control planes, data planes, and stateless model inference

To make sense of “hosted on Azure,” it helps to split the world into the two planes every enterprise architect eventually runs into, and then to be precise about what “stateless” does and doesn’t mean.

1) The control plane

The control plane is where you authenticate, configure resources, set policy, and view operational telemetry. In Azure terms, this includes Azure Resource Manager, role-based access control, network configuration, key management, and the administrative experience.

When you use Azure OpenAI, the control plane is clearly Microsoft’s: you deploy models into an Azure resource, you apply Azure-native controls, and you integrate with the identity and governance patterns you already use for the rest of your platform.

2) The data plane

The data plane is the actual runtime path: prompts go in, tokens come out. This is the inference pipeline—front doors, gateways, safety filters, model servers, and the systems that log and monitor service health.

This is where “hosted on Azure” becomes meaningful. The inference fleet (the GPU-heavy part) can be physically and operationally on Azure infrastructure, while still being a service you consume via OpenAI-branded endpoints or Microsoft-branded endpoints, depending on the offer.

3) Stateless inference is a feature, but your application is never stateless

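One technical misunderstanding I keep seeing: leaders hear “the model is stateless” and assume “our data doesn’t persist anywhere.” In reality, stateless usually means the model keeps no memory between requests and doesn’t learn from your prompts as it serves them. Each call sees only the context you send with it. Statelessness says nothing about what the service or your application stores.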

Your application is the stateful part. If you store chat history, citations, embeddings, documents, and traces, then you’ve built a stateful system—whether the model is stateless or not.
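To make the distinction concrete, here is a minimal Python sketch. `fake_model` is a hypothetical stand-in for a stateless inference call (no real API is called), and `ChatSession` is the application layer. The conversation only feels continuous because the application stores the history and re-sends it with every request.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple

# A message is a (role, content) pair, mirroring chat-style requests.
Message = Tuple[str, str]


def fake_model(messages: List[Message]) -> str:
    """Hypothetical stand-in for a stateless inference call.

    The reply depends only on the messages in *this* request; nothing is
    remembered between calls.
    """
    user_turns = [content for role, content in messages if role == "user"]
    return f"I can see {len(user_turns)} user message(s) in this request."


@dataclass
class ChatSession:
    """The stateful part: your application, not the model."""

    model: Callable[[List[Message]], str]
    history: List[Message] = field(default_factory=list)

    def ask(self, text: str) -> str:
        self.history.append(("user", text))
        # The whole history is re-sent on every call. Continuity comes from
        # this list, not from the model remembering anything.
        reply = self.model(self.history)
        self.history.append(("assistant", reply))
        return reply


session = ChatSession(fake_model)
print(session.ask("Hello"))     # I can see 1 user message(s) in this request.
print(session.ask("And now?"))  # I can see 2 user message(s) in this request.
```

Everything in `history` is data you now hold, retain, and must govern, regardless of what the model remembers.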

Three different meanings of “OpenAI hosted on Azure”

Over the last couple of years, the phrase “hosted on Azure” has been used to describe different slices of the ecosystem. Here’s the cleanest way I’ve found to explain it to CIOs and CTOs without hand-waving.

Meaning 1: OpenAI’s first-party products run on Azure infrastructure

This is the “ChatGPT-like” world: OpenAI’s own products and experiences. When Microsoft says OpenAI’s first-party products are hosted on Azure, it’s describing the infrastructure layer powering those offerings.

Enterprise implication: this does not automatically mean your organisation gets Azure enterprise controls when staff use a consumer product. Infrastructure location and enterprise governance are two different questions.

Meaning 2: Partner or third-party integrations may still be served from Azure

Microsoft has also been explicit that certain API calls to OpenAI models resulting from collaborations with third parties can be hosted on Azure. The key word is “stateless”: it describes an inference-style call where you send a prompt and get a response, not a long-lived, tenant-scoped deployment you manage.

Enterprise implication: even if the underlying GPUs are on Azure, the commercial boundary (who you contract with, what terms apply, what logs exist, what support model exists) may not be the same as Azure OpenAI.

Meaning 3: Azure OpenAI is Microsoft’s enterprise wrapper around OpenAI models

This is the version most relevant to enterprise architecture. Azure OpenAI is a Microsoft Azure service that exposes OpenAI models (and sometimes adjacent capabilities) through Azure-native constructs: regions, resources, identity integration, private networking options, and Azure policy patterns.

Enterprise implication: this is where “hosted on Azure” can translate into concrete governance conversations—especially around data residency expectations, abuse monitoring, logging, and network isolation.
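A good first step in working out which meaning applies is to inventory the base URLs your applications call. This is a minimal sketch that recognises only two well-known hostname patterns: `api.openai.com` for OpenAI direct, and `<resource>.openai.azure.com` for Azure OpenAI. The function name and bucket labels are hypothetical. Everything else goes to manual review, including third-party products that embed OpenAI models, because a hostname alone can’t tell you the commercial terms.

```python
from urllib.parse import urlparse


def classify_endpoint(url: str) -> str:
    """Bucket an AI endpoint URL by hostname (hypothetical labels).

    Only two well-known patterns are recognised; everything else needs a
    human to check the contract, terms, and data handling.
    """
    host = (urlparse(url).hostname or "").lower()
    if host == "api.openai.com":
        return "openai-direct"
    if host.endswith(".openai.azure.com"):
        return "azure-openai"
    return "review-required"


for url in [
    "https://api.openai.com/v1/chat/completions",
    "https://contoso-ai.openai.azure.com/openai/deployments/chat",
    "https://api.example-vendor.com/assistant",
]:
    print(classify_endpoint(url), url)
```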

What it means for data security and privacy in practice

In Australia, these conversations usually land quickly on ACSC-aligned security controls, Essential Eight maturity discussions, and privacy obligations. “Hosted on Azure” can help, but only if you connect it to the controls that actually reduce risk.

1) Identity and access control becomes familiar again

When you can anchor access to Entra ID, managed identities, and well-understood role assignments, you avoid the sprawl of API keys living in CI/CD variables and developer laptops.

In my experience, this is one of the fastest wins for reducing accidental exposure. Leaked or over-shared keys aren’t glamorous, but they are consistently where incidents start.
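During a move to Entra ID, it helps to find where static keys still live. The sketch below scans a dictionary of variable names and values, such as an export of CI/CD pipeline variables. The regular expression is a hypothetical naming convention, not a Microsoft standard, so adjust it to match your own.

```python
import re
from typing import Dict, List

# Hypothetical naming convention for model API keys; adjust to your own.
KEY_NAME = re.compile(r"(OPENAI|AOAI).*(KEY|SECRET)", re.IGNORECASE)


def find_static_model_keys(variables: Dict[str, str]) -> List[str]:
    """Return names of non-empty variables that look like model API keys."""
    return sorted(
        name
        for name, value in variables.items()
        if KEY_NAME.search(name) and value.strip()
    )


pipeline_vars = {
    "AZURE_OPENAI_API_KEY": "<redacted>",
    "AZURE_OPENAI_ENDPOINT": "https://contoso-ai.openai.azure.com",
    "OPENAI_API_KEY": "",
    "BUILD_ID": "1234",
}
print(find_static_model_keys(pipeline_vars))  # ['AZURE_OPENAI_API_KEY']
```

Each hit is a candidate for replacement with a managed identity and a role assignment. Once that is done, the key can be rotated and removed.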

2) Networking options matter more than the model brand

If your AI traffic traverses the public internet, you have to compensate with other controls. If you can keep traffic on private paths (for example, private endpoints and controlled egress), you reduce your exposure and simplify detective controls.

I’ve seen teams spend months debating model choice and then deploy it with wide-open networking because “we’ll lock it down later.” With AI, “later” tends to arrive after someone pastes sensitive content into a prompt.
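One practical check for private networking is to resolve the service hostname from inside your network and confirm that every address is private. With Azure Private Link and a linked private DNS zone, the public hostname should resolve to a private IP from within the VNet. This minimal sketch takes the resolved addresses as input so it can run offline. In practice you might collect them with `socket.getaddrinfo` on a host in the VNet. The result labels are hypothetical.

```python
import ipaddress
from typing import List


def classify_resolution(addresses: List[str]) -> str:
    """Report whether an endpoint's resolved addresses are all private.

    `addresses` should be what the hostname resolves to from inside your
    network; it is passed in so the check can run offline.
    """
    if not addresses:
        return "unresolved"
    private = [ipaddress.ip_address(a).is_private for a in addresses]
    if all(private):
        return "private"  # traffic should stay on the private path
    if any(private):
        return "mixed"    # may indicate a DNS misconfiguration worth investigating
    return "public"       # requests would cross the public internet


print(classify_resolution(["10.20.0.5"]))  # private
print(classify_resolution(["20.53.1.1"]))  # public
```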

3) Logging and monitoring are the hidden trade-off

Enterprises want two things that often conflict: minimal data retention and strong observability. Most AI services have some form of monitoring and abuse detection, and you need to understand what gets logged, for how long, and under what conditions humans may review flagged content.

From a governance standpoint, I treat this like any other security telemetry conversation: decide what you need to detect misuse, then minimise everything else, and document the decision.
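Here is a minimal sketch of “minimise everything else.” It logs the metadata needed to detect misuse (who, when, sizes, and a content hash for correlating repeats) and keeps prompt text only for flagged interactions. The field names and the 200-character excerpt rule are assumptions for illustration, not a recommended retention policy.

```python
import hashlib
from datetime import datetime, timezone
from typing import Any, Dict

EXCERPT_CHARS = 200  # hypothetical policy: keep a short excerpt only when flagged


def build_log_record(user_id: str, prompt: str, completion: str,
                     flagged: bool) -> Dict[str, Any]:
    """Build a minimised log record for one model interaction."""
    record: Dict[str, Any] = {
        "ts": datetime.now(timezone.utc).isoformat(),
        "user": user_id,
        # A hash lets you correlate repeated prompts without storing them.
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "prompt_chars": len(prompt),
        "completion_chars": len(completion),
        "flagged": flagged,
    }
    if flagged:
        # Content is retained only where a misuse investigation needs it.
        record["prompt_excerpt"] = prompt[:EXCERPT_CHARS]
    return record
```

A hash of a short or predictable prompt can be reversed by guessing, so treat it as pseudonymised rather than anonymised data.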

4) Data residency is not a single checkbox

“Hosted on Azure” is sometimes interpreted as “data stays in Australia.” The reality is more nuanced. You can often choose a region for your resource, but different operations (especially advanced workflows like fine-tuning, evaluation, or certain safety services) may have different data flows.

My practical advice is to model this explicitly. Write down where prompts originate, where they transit, where they are processed, what is stored, and what is optional. That document becomes the basis for your security review and your privacy impact assessment.
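Writing the data flows down can be as simple as keeping a structured inventory that your review can query. In this sketch, the stages, the `australiaeast` region, and the `unconfirmed` placeholder are illustrative assumptions. The point is that any flow whose location you can’t confirm surfaces as an exception to resolve, rather than disappearing behind “hosted on Azure.”

```python
from dataclasses import dataclass
from typing import List, Set


@dataclass(frozen=True)
class DataFlow:
    stage: str          # where in the workflow the data is
    location: str       # region or location you have actually confirmed
    stores_data: bool   # does this stage persist anything?
    optional: bool      # can the feature be switched off?


def residency_exceptions(flows: List[DataFlow], allowed: Set[str]) -> List[DataFlow]:
    """Return flows whose location is outside the allowed set."""
    return [f for f in flows if f.location not in allowed]


flows = [
    DataFlow("prompt origin (user device)", "australia", False, False),
    DataFlow("inference", "australiaeast", False, False),
    DataFlow("chat history store", "australiaeast", True, False),
    DataFlow("fine-tuning job", "unconfirmed", True, True),
]
for f in residency_exceptions(flows, {"australia", "australiaeast"}):
    print(f.stage, f.location, "stores data" if f.stores_data else "transient")
```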

What it means for reliability and capacity planning

When workloads are “hosted on Azure,” leaders often assume enterprise-grade reliability by default. Azure reliability engineering is strong, but AI has some unique capacity dynamics.

- Capacity is GPU-bound: you can’t always burst like you would with CPU-based web apps.

- Throughput and latency are workload-shaped: long prompts and long responses change the performance profile dramatically.

- Rate limits are part of the design: treat them as architectural constraints, not inconveniences.
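Azure OpenAI quotas are commonly expressed in tokens per minute. One way to treat them as a design constraint is to budget tokens client-side before sending a request. This is a minimal token-bucket sketch under stated assumptions. The class name is hypothetical, and the quota should be whatever your deployment actually allows. `estimate_tokens` uses the rough four-characters-per-token heuristic for English text, not a real tokenizer.

```python
import time
from typing import Callable


class TokenBudget:
    """Client-side token bucket sized in tokens per minute (hypothetical)."""

    def __init__(self, tokens_per_minute: int,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.capacity = float(tokens_per_minute)
        self.available = float(tokens_per_minute)
        self.refill_per_s = tokens_per_minute / 60.0
        self.clock = clock
        self.last = clock()

    def try_spend(self, prompt_tokens: int, max_output_tokens: int) -> bool:
        """Reserve prompt + maximum output tokens; False means wait or degrade."""
        now = self.clock()
        elapsed = now - self.last
        self.available = min(self.capacity, self.available + elapsed * self.refill_per_s)
        self.last = now
        cost = prompt_tokens + max_output_tokens
        if cost > self.available:
            return False
        self.available -= cost
        return True


def estimate_tokens(text: str) -> int:
    """Rough heuristic (~4 characters per token for English), not a tokenizer."""
    return max(1, len(text) // 4)


budget = TokenBudget(tokens_per_minute=6000)
prompt = "Summarise the attached policy in five bullet points."
print(budget.try_spend(estimate_tokens(prompt), max_output_tokens=400))
```

Reserving `max_output_tokens` up front is how a response length cap becomes part of the budget. A request that could produce a long answer is charged for it before it is sent.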

I’ve helped teams avoid painful rework by putting simple guardrails in place early: token budgets, response length caps, circuit breakers, and user experience fallbacks when the model is slow or throttled.
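Circuit breakers and fallbacks fit together naturally. The sketch below is a generic circuit breaker, not an Azure SDK feature. After `failure_threshold` consecutive failures (for example, timeouts or throttling errors), it stops calling the model for `reset_after_s` seconds and serves a fallback instead. It then lets one trial call through. The threshold values are placeholders.

```python
import time
from typing import Callable, Optional


class CircuitBreaker:
    """Generic circuit breaker with a fallback (not an Azure SDK feature)."""

    def __init__(self, failure_threshold: int = 3, reset_after_s: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.reset_after_s = reset_after_s
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, fn: Callable[[], str], fallback: Callable[[], str]) -> str:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after_s:
                return fallback()      # open: skip the slow or throttled service
            self.opened_at = None      # half-open: let one trial call through
        try:
            result = fn()
        except Exception:
            self.failures += 1
            if self.failures >= self.failure_threshold:
                self.opened_at = self.clock()  # (re)open the circuit
            return fallback()
        self.failures = 0              # success closes the circuit
        return result
```

In a real application, `fn` would wrap your model call with a timeout. `fallback` might return a cached answer or a “try again shortly” message. Both are left to you here.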

A simple checklist for interpreting “hosted on Azure” as a tech leader

When someone says “OpenAI is hosted on Azure,” I ask a few clarifying questions before I let the conversation move on.

- Which endpoint are we using? Azure OpenAI, OpenAI direct, or a third-party product embedding OpenAI?

- What is the identity model? Entra ID, API keys, or something else?

- What networking controls exist? Public internet, private endpoints, controlled egress?

- What is logged, and for how long? Prompts, outputs, metadata, flagged content?

- What’s our data residency expectation? Region selection, cross-region processing, and any exceptions?

- Where does state live? Chat history, vector stores, document repositories, traces?

If you can answer those six, you’ve turned a vague statement into an architecture you can govern.
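The six questions also translate directly into a review record that can sit alongside your architecture decisions. This is a hypothetical structure, not a standard template. `open_questions` simply lists the fields nobody has answered yet.

```python
from dataclasses import dataclass, fields
from typing import List, Optional


@dataclass
class HostedOnAzureReview:
    endpoint: Optional[str] = None         # Azure OpenAI, OpenAI direct, third-party product
    identity_model: Optional[str] = None   # Entra ID, API keys, something else
    networking: Optional[str] = None       # public internet, private endpoints, controlled egress
    logging: Optional[str] = None          # what is logged, and for how long
    data_residency: Optional[str] = None   # region, cross-region processing, exceptions
    state_locations: Optional[str] = None  # chat history, vector stores, documents, traces

    def open_questions(self) -> List[str]:
        """Names of checklist items that are still blank."""
        return [f.name for f in fields(self)
                if not (getattr(self, f.name) or "").strip()]


review = HostedOnAzureReview(endpoint="Azure OpenAI", identity_model="Entra ID")
print(review.open_questions())
```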

A forward-looking takeaway

My sense is we’ll hear “hosted on Azure” more often, not less—especially as models are embedded into every product category and partnerships keep shifting. The phrase will remain true at an infrastructure level, while becoming less useful as a proxy for governance.

The question I’m sitting with is this: as AI becomes a standard enterprise capability like identity or email, do we need a clearer industry vocabulary than “hosted on” to describe control, accountability, and data boundaries?
