In this blog post, “OpenAI’s $110B Raise and What It Changes in Enterprise AI Roadmaps”, I’ll unpack what a raise of this scale really signals for enterprise leaders, and how I’d adjust an AI roadmap to reduce lock-in, improve governance, and accelerate value safely.

On the surface, a funding headline like this sounds like market noise. In practice, funding at this magnitude changes the “shape of the road” for enterprise AI: who controls the platform, where compute is likely to concentrate, and which capabilities will become commoditised versus differentiated.

From Melbourne, working across Australian and international organisations, I’ve learned that most AI roadmaps fail for boring reasons. Data access, identity, privacy, and operating model problems show up long before model quality becomes the blocker.

So I’m not reading this raise as “OpenAI will build cooler models.” I’m reading it as a signal about infrastructure scale, ecosystem gravity, and competitive pressure that will flow into our architecture choices.

High-level meaning for CIOs and CTOs

In my experience, when a platform provider raises at this scale, three things follow. First, the pace of product change accelerates. Second, enterprise leverage decreases unless you deliberately design for options. Third, every other vendor responds with price moves, packaging changes, and “AI-first” roadmaps that can distort priorities.

If you lead technology strategy, the question isn’t “Do we bet on OpenAI?” The question is “How do we build an enterprise AI capability that can survive the next three years of rapid platform shifts?”

The core technology behind the shift (explained without the hype)

At the heart of all of this is a simple reality: modern AI is a compute-and-data supply chain. Large language models are trained on massive datasets using enormous clusters of specialised hardware, then served to users through APIs that run inference (the model generating answers) at scale.

When you see a $110B raise, you’re seeing a bet on that supply chain. Not just research, but datacentres, GPUs and accelerators, networking, energy, and the software platforms that turn raw model capability into usable enterprise features like identity integration, audit logs, data controls, and compliance tooling.

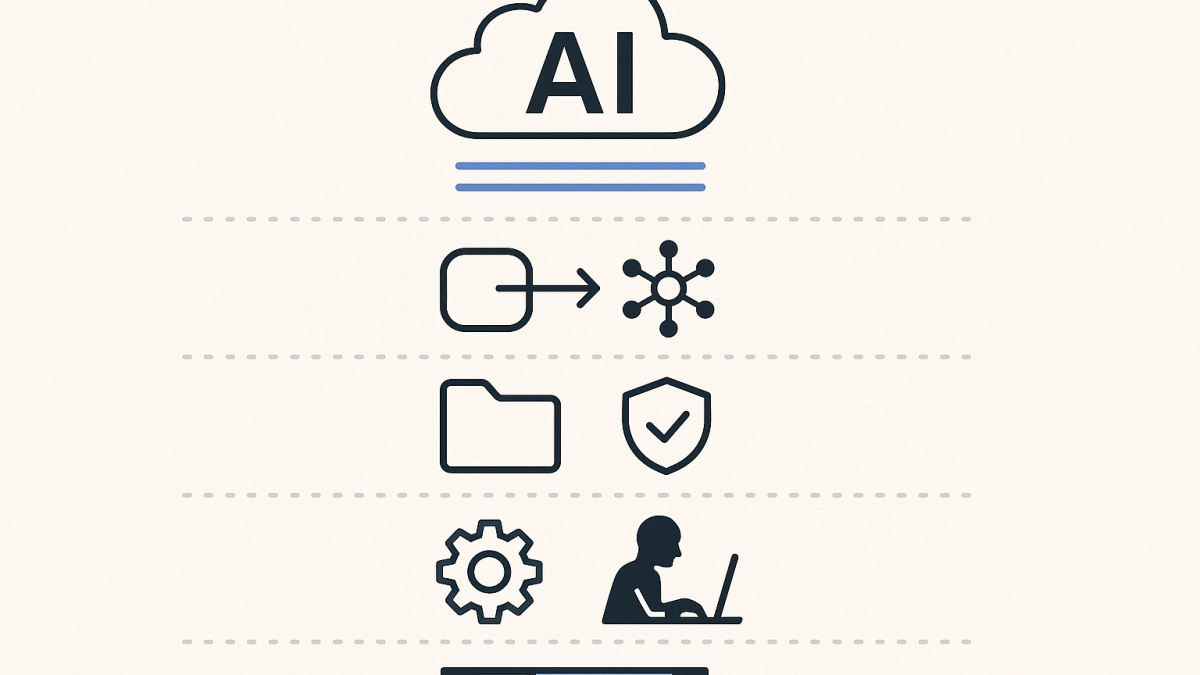

For most organisations, the “enterprise AI platform” becomes a layered architecture:

- Model layer: one or more foundation models accessed via API (OpenAI, Anthropic, open-weight models hosted privately, etc.).

- Orchestration layer: prompt routing, tool calling, evaluation, caching, and policy enforcement.

- Knowledge layer: retrieval augmented generation (RAG), where the model grounds answers in your internal documents and systems.

- Security and governance layer: identity, logging, data loss prevention, classification, and approvals.

- Product layer: copilots inside business workflows (service desk, sales, finance, engineering, HR).

The raise matters because it increases the probability that the model layer gets cheaper, faster, and more capable, while the orchestration and governance layers become the place where enterprises either keep or lose control.

What I’d change in an enterprise AI roadmap right now

1) Treat “model choice” as a policy decision, not a project decision

One pattern I keep running into is teams selecting a model provider inside a single use case, then accidentally making it the enterprise default. Six months later, you have five different wrappers, inconsistent logging, and no clean way to swap providers when pricing or risk changes.

I’d formalise a model access policy early. This doesn’t need to be heavy, but it should define approved providers, data sensitivity tiers, and where models may be used (sandbox vs production, internal vs external users).
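To show how light this can be, here is a minimal sketch of a model access policy expressed as data plus one check function. The provider names, tier names, and rules are hypothetical placeholders I made up for illustration, not recommendations; the point is only that approved providers, sensitivity tiers, and environments can live in one reviewable place.

```python
from dataclasses import dataclass
from enum import IntEnum


class DataTier(IntEnum):
    PUBLIC = 1
    INTERNAL = 2
    RESTRICTED = 3


@dataclass(frozen=True)
class ProviderRule:
    max_tier: DataTier        # most sensitive tier this provider may receive
    environments: frozenset   # where it may run, e.g. {"sandbox", "production"}
    external_users: bool      # may it serve users outside the organisation?


# Hypothetical provider names and rules, for illustration only.
POLICY = {
    "hosted-api-a": ProviderRule(
        DataTier.INTERNAL, frozenset({"sandbox", "production"}), True
    ),
    "private-open-weight": ProviderRule(
        DataTier.RESTRICTED, frozenset({"sandbox", "production"}), False
    ),
    "experimental-b": ProviderRule(DataTier.PUBLIC, frozenset({"sandbox"}), False),
}


def is_allowed(provider, tier, environment, external):
    """Return True only if the policy explicitly permits this combination."""
    rule = POLICY.get(provider)
    if rule is None:
        return False  # unknown providers are denied by default
    return (
        tier <= rule.max_tier
        and environment in rule.environments
        and (rule.external_users or not external)
    )
```

With these example rules, `is_allowed("hosted-api-a", DataTier.RESTRICTED, "production", False)` is refused, because that provider is only cleared up to the internal tier. Deny-by-default for unknown providers is a design choice I would keep even if everything else changes.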

In Australia, this is where I like to align with the spirit of the ACSC Essential Eight. Not because AI is “just another endpoint,” but because maturity thinking matters: application control, patching, least privilege, MFA, and backups become very real when AI agents start touching systems.

2) Assume pricing and packaging will change frequently

Big capital tends to drive growth targets. Growth targets tend to drive packaging experiments: bundling, tiering, new SKUs, and shifting rate limits. I’ve seen organisations overfit their architecture to a specific pricing model, and that’s a quiet roadmap killer.

Practical step: design an AI consumption layer that centralises usage tracking, cost allocation, and rate limiting. If you can’t measure it, you can’t govern it. If you can’t govern it, you can’t scale it beyond a few enthusiastic teams.
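As a rough illustration of what that consumption layer might do, the sketch below tracks tokens per application, enforces a per-app budget, and applies a sliding-window rate limit. The class name, the budget unit (tokens), and the in-memory storage are all my assumptions; a real deployment would sit in a gateway and persist its counters.

```python
import time
from collections import defaultdict, deque


class ConsumptionLayer:
    """Tracks token usage per app and enforces a budget and a request rate limit."""

    def __init__(self, budgets, max_requests, window_seconds, clock=time.monotonic):
        self.budgets = dict(budgets)          # app -> token budget for the period
        self.max_requests = max_requests      # requests allowed per window, per app
        self.window_seconds = window_seconds
        self.clock = clock                    # injectable so the logic can be tested
        self.tokens_used = defaultdict(int)   # app -> tokens consumed so far
        self.recent = defaultdict(deque)      # app -> timestamps of recent requests

    def check(self, app):
        """Return (allowed, reason) before any model call is made."""
        now = self.clock()
        window = self.recent[app]
        while window and now - window[0] >= self.window_seconds:
            window.popleft()                  # drop requests outside the window
        if len(window) >= self.max_requests:
            return False, "rate_limited"
        # Apps without a configured budget get zero, so they are refused.
        if self.tokens_used.get(app, 0) >= self.budgets.get(app, 0):
            return False, "budget_exhausted"
        window.append(now)
        return True, "ok"

    def record(self, app, tokens):
        """Record tokens consumed by a completed call, for cost allocation."""
        self.tokens_used[app] += tokens

    def report(self):
        """Usage per app, suitable for a showback or chargeback report."""
        return dict(self.tokens_used)
```

Because the layer counts tokens rather than dollars, a pricing or packaging change only touches the conversion step in reporting, not the applications calling it.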

3) Double down on “enterprise RAG” before you chase agents everywhere

Executives want agents. Developers want agents. Vendors want you to believe agents will run your business next quarter.

My experience is that most value still comes from making knowledge usable: policies, procedures, customer history, project docs, and operational runbooks. RAG is not glamorous, but it’s where trust is built, because you can show sources, reduce hallucinations, and control what information is in scope.

Roadmap adjustment: prioritise a single enterprise retrieval strategy (document stores, metadata, permissions, and indexing) and reuse it across use cases. Don’t rebuild search, chunking, and access control in every team.
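The part teams most often rebuild badly is access control, so here is a small sketch of a shared retrieval function that filters by permissions before it ranks anything. The `Chunk` shape, the group names, and the naive term-overlap scoring are assumptions for illustration; a real index would use embeddings or a search engine, but the ordering of "filter, then rank" is the idea I care about.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    doc_id: str
    text: str
    allowed_groups: frozenset  # groups permitted to read the source document


def retrieve(query, chunks, user_groups, top_k=3):
    """Return up to top_k chunks the user may read, ranked by naive term overlap."""
    terms = set(query.lower().split())
    scored = []
    for chunk in chunks:
        if not (chunk.allowed_groups & user_groups):
            continue  # enforce permissions before ranking, not after generation
        overlap = len(terms & set(chunk.text.lower().split()))
        if overlap:
            scored.append((overlap, chunk))
    # Stable sort: chunks with equal scores keep their index order.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [chunk for _, chunk in scored[:top_k]]
```

If every use case calls one function like this, a permissions fix lands once, and the model never sees a chunk the user could not have opened themselves.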

4) Make security a first-class feature, not a gate

A funding headline can push organisations into a “move fast” posture. That’s understandable, but it’s also how sensitive data ends up in the wrong place, and how shadow AI becomes the default.

What I recommend is a security posture that feels enabling:

- Identity everywhere: tie model access to Entra ID (or your identity provider), with conditional access and MFA for privileged operations.

- Data classification: define what can be used in prompts, what requires redaction, and what must never leave controlled boundaries.

- Logging and audit: keep prompt/response logs where appropriate, with retention rules, and be explicit about who can access them.

- Threat modelling: prompt injection, data exfiltration through tool calling, and supply chain risks are not theoretical anymore.
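To make the data classification point concrete, this is a deliberately simple sketch of redacting a prompt before it leaves a controlled boundary. The two regular expressions are illustrative assumptions only and will miss plenty; proper data loss prevention tooling should do the real detection. What the sketch shows is the shape: redact first, and return what was found so it can be logged.

```python
import re

# Illustrative patterns only; real classification needs proper DLP tooling.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "LONG_NUMBER": re.compile(r"\b\d{8,}\b"),
}


def redact(prompt):
    """Replace matches with placeholders and report which kinds were found."""
    found = []
    for label, pattern in PATTERNS.items():
        prompt, count = pattern.subn(f"[{label}]", prompt)
        if count:
            found.append(label)
    return prompt, found
```

Returning the list of detected kinds alongside the cleaned prompt lets the audit log record that something sensitive was present without storing the value itself.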

In an Australian context, this is also where privacy expectations bite. Leaders don’t need every clause of legislation memorised, but they do need a design principle: don’t collect more than you need, don’t retain longer than necessary, and be transparent about how AI uses data.

5) Plan for a multi-cloud and multi-model reality even if you standardise

Here’s the nuance I share with peers: standardisation is good, but “single vendor” is rarely an enterprise strength. A raise this large increases the chance that one provider becomes a gravity well, and gravity wells are convenient until they aren’t.

I’d standardise on one primary platform for velocity, but keep deliberate escape hatches:

- Abstract model calls behind a small internal API.

- Keep prompts and evaluations versioned and portable.

- Separate your knowledge store from your model provider.

- Use open formats for documents and embeddings where possible.
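The first escape hatch is the cheapest to build, so here is a minimal sketch of what “a small internal API” could look like. `ModelClient`, `EchoClient`, and `ModelGateway` are names I invented for this example, and `EchoClient` is a stand-in that calls no real service; in practice each adapter would wrap one vendor SDK.

```python
from typing import Optional, Protocol


class ModelClient(Protocol):
    """The only interface applications are allowed to depend on."""

    def complete(self, prompt: str) -> str: ...


class EchoClient:
    """Stand-in for a real provider adapter; it calls no external service."""

    def __init__(self, name: str):
        self.name = name

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


class ModelGateway:
    """Single internal entry point; applications never import a vendor SDK."""

    def __init__(self, clients: dict, primary: str):
        self.clients = clients
        self.primary = primary

    def complete(self, prompt: str, provider: Optional[str] = None) -> str:
        # Fall back to the primary provider unless the caller names another.
        client = self.clients[provider or self.primary]
        return client.complete(prompt)
```

Swapping the default provider then becomes a one-line configuration change (`gateway.primary = "b"`) rather than a hunt through every application’s code.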

What technical leaders can do Monday morning

- Create an AI architecture one-pager that shows the layers (model, orchestration, knowledge, security, apps) and the approved patterns.

- Define three data tiers for prompts (public, internal, restricted) and link each tier to approved controls and providers.

- Stand up evaluation early: regression tests for prompts, accuracy checks for RAG answers, and red-team tests for injection attempts.

- Build cost observability: central usage metrics, per-app budgets, and rate limiting.

- Decide your “agent boundary”: where agents are allowed to take actions (create tickets, send emails, change configs) and where they must ask for approval.
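The agent boundary in particular is easier to agree on once it is written down as a table. Below is a small sketch under my own assumptions: the action names mirror the examples above, the three-way decision is one possible scheme, and anything not listed is denied.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    REQUIRE_APPROVAL = "require_approval"
    DENY = "deny"


# Hypothetical action names; anything not listed falls through to DENY.
AGENT_BOUNDARY = {
    "create_ticket": Decision.ALLOW,
    "send_email": Decision.REQUIRE_APPROVAL,
    "change_config": Decision.REQUIRE_APPROVAL,
}


def decide(action, approved_by=None):
    """Return the decision for an agent action, honouring a human approval."""
    decision = AGENT_BOUNDARY.get(action, Decision.DENY)
    if decision is Decision.REQUIRE_APPROVAL and approved_by:
        return Decision.ALLOW  # a named human has signed off on this action
    return decision
```

Note that an approval never upgrades a denied action: `decide("delete_database", approved_by="alice")` still returns `Decision.DENY`, which keeps the boundary itself a governance decision rather than something an individual can override.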

My takeaway

A $110B raise doesn’t change the fundamentals of enterprise architecture. It amplifies them. Platform change will speed up, competitive pressure will increase, and leaders will be asked to commit to roadmaps with incomplete information.

The organisations that do well won’t be the ones that guessed the right vendor. They’ll be the ones that built a durable AI operating model: clear data tiers, strong identity, reusable retrieval, measurable cost, and a pragmatic way to swap models as the landscape evolves.

If you look at your current AI roadmap, where is it most fragile today: data foundations, governance, vendor dependency, or the ability to measure business impact?
