Most agent frameworks treat security as something you bolt on after the fact. NVIDIA just shipped one where security is the architecture.

The Fundamental Problem With Agent Guardrails Today

I’ve reviewed enough enterprise agent designs to spot the pattern. The guardrails live inside the agent process. System prompts tell the model what not to do. Safety layers sit in the same runtime as the code they’re supposed to constrain.

That’s like putting the lock on the inside of the door.

NVIDIA’s OpenShell blog spelled it out clearly: there’s a trilemma with autonomous agents. You want safety, capability and autonomy, but existing approaches reliably give you only two at a time. If you make the agent safe and autonomous but restrict its tools, it can’t finish the job. If it’s capable and safe but gated on approvals, you’re babysitting it. If it’s capable and autonomous with full access, the guardrails are policing themselves.

That last scenario is the one most enterprise teams are accidentally building toward.

Why Out-of-Process Enforcement Changes Everything

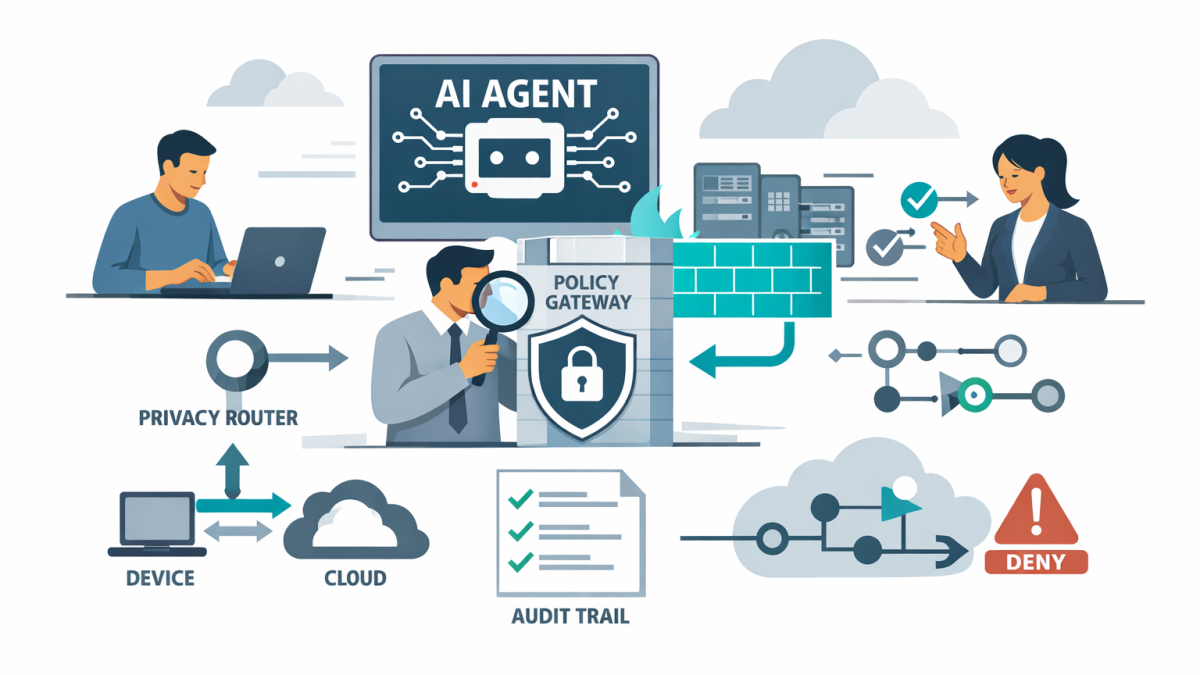

NemoClaw’s core architectural decision is moving policy enforcement outside the agent. OpenShell, the sandboxed runtime NemoClaw runs the agent in, sits between the agent and the infrastructure. The agent cannot override the constraints because the constraints don’t live in the agent’s process.

This is the browser tab model applied to AI agents. Sessions are isolated. Permissions are verified by the runtime before any action executes. The agent reasons. The runtime governs.

The OpenShell policy engine evaluates every action at the binary, destination, method and path level. An agent can install a verified skill but cannot execute an unreviewed binary. It can request a policy update, but you hold the final approval. There’s a full audit trail of every allow and deny decision.

For anyone who’s spent time in enterprise security, this will feel familiar. It’s the principle of least privilege applied to autonomous software.

Privacy Routing as a Design Primitive

The second piece that matters is the privacy router. In most agent setups I’ve seen, data flows wherever the model endpoint happens to be. If you’re using a cloud-hosted frontier model, your context goes to the cloud. Full stop.

OpenShell’s privacy router makes this a policy decision, not an implementation accident. Sensitive context stays on-device with local Nemotron models. Cloud-based frontier models (Claude, GPT, whatever you’re using) only receive data when your policy explicitly permits it.
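A rough sketch of what “routing as a policy decision” can look like follows. This is not the privacy router’s real interface; the `Sensitivity` levels, `RoutingPolicy` and `route` are hypothetical names, and I’m assuming context arrives already labelled with a sensitivity level.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Optional


class Sensitivity(IntEnum):
    """Hypothetical data classification, lowest to highest."""
    PUBLIC = 1
    INTERNAL = 2
    RESTRICTED = 3


@dataclass(frozen=True)
class RoutingPolicy:
    # Highest sensitivity permitted to reach a cloud model.
    # None means nothing is permitted to leave the device.
    max_cloud_sensitivity: Optional[Sensitivity] = None


def route(sensitivity: Sensitivity, policy: RoutingPolicy) -> str:
    """Return "cloud" only when the policy explicitly permits it."""
    limit = policy.max_cloud_sensitivity
    if limit is not None and sensitivity <= limit:
        return "cloud"
    return "local"  # the default when the policy is silent


policy = RoutingPolicy(max_cloud_sensitivity=Sensitivity.INTERNAL)
route(Sensitivity.PUBLIC, policy)      # eligible for the cloud model
route(Sensitivity.RESTRICTED, policy)  # stays with the local model
```

The design choice worth copying is the default. An empty policy keeps everything local, so sending data off-device always requires someone to have written that permission down.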

This isn’t just a privacy feature. It’s a cost feature, because context handled by a local model never generates a cloud inference bill. And for Australian organisations working under the Privacy Act and ACSC guidance, it’s a compliance feature.

The Threat Model for Long-Running Agents

Here’s what makes this urgent. Stateless chatbots have a comparatively small attack surface. An agent with persistent shell access, live credentials, the ability to rewrite its own tooling and six hours of accumulated context running against your internal APIs presents a fundamentally different threat model.

Every prompt injection becomes a potential credential leak. Every third-party skill the agent installs is an unreviewed binary with filesystem access. Every subagent it spawns can inherit permissions it was never meant to have.
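The subagent problem in particular has a simple shape. One common mitigation, sketched here under my own assumptions rather than taken from NemoClaw, is to grant a child only the intersection of what it asks for and what its parent already holds. The `scope_subagent` function and the permission strings are hypothetical.

```python
from typing import FrozenSet, Tuple


def scope_subagent(
    parent: FrozenSet[str], requested: FrozenSet[str]
) -> Tuple[FrozenSet[str], FrozenSet[str]]:
    """Return (granted, refused) permissions for a newly spawned subagent.

    A child never receives more than it asked for, and never more than
    its parent holds.
    """
    granted = parent & requested
    refused = requested - parent
    return granted, refused


parent = frozenset({"fs:read", "fs:write", "net:api.example.com"})
requested = frozenset({"fs:read", "net:pypi.org"})
granted, refused = scope_subagent(parent, requested)
# granted holds only "fs:read"; "net:pypi.org" is refused because the
# parent never had it, and "fs:write" is withheld because it was not requested.
```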

That’s not hypothetical. That’s the operational reality for any organisation running long-lived coding agents or autonomous workflows today.

What This Means in Practice

NemoClaw is released under the Apache 2.0 licence and works with any coding agent (Claude Code, Codex, Cursor, OpenCode) without code changes. You run one command and the agent operates inside OpenShell’s sandbox with the policy engine and privacy router active.

The deny-by-default posture means nothing runs without explicit permission. Live policy updates happen at sandbox scope. The audit trail captures every decision.

If an agent hits a constraint, it can reason about the roadblock and propose a policy update. But the human holds the final approval. That’s the right balance between autonomy and control.
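Here is a minimal sketch of that propose-and-approve loop. It assumes nothing about how NemoClaw actually implements it; `SandboxPolicy`, `Proposal` and the rule strings are illustrative names of my own.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Set


class Status(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class Proposal:
    rule: str     # the permission the agent is asking for
    reason: str   # the agent's explanation, shown to the reviewer
    status: Status = Status.PENDING


@dataclass
class SandboxPolicy:
    allowed: Set[str] = field(default_factory=set)
    proposals: List[Proposal] = field(default_factory=list)

    def is_allowed(self, rule: str) -> bool:
        return rule in self.allowed

    def propose(self, rule: str, reason: str) -> Proposal:
        """Agent-facing: records a request but grants nothing."""
        proposal = Proposal(rule, reason)
        self.proposals.append(proposal)
        return proposal

    def review(self, proposal: Proposal, approve: bool) -> None:
        """Human-facing: the only path that changes what is allowed."""
        if proposal.status is not Status.PENDING:
            raise ValueError("proposal has already been reviewed")
        if approve:
            proposal.status = Status.APPROVED
            self.allowed.add(proposal.rule)
        else:
            proposal.status = Status.REJECTED


policy = SandboxPolicy()
request = policy.propose("net:pypi.org", "need to install a test dependency")
policy.is_allowed("net:pypi.org")     # still denied while pending
policy.review(request, approve=True)  # a human makes the call
policy.is_allowed("net:pypi.org")     # now permitted
```

In a real deployment the `review` path would have to sit outside the agent’s process, otherwise the agent could simply approve its own request.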

Security Can’t Be an Afterthought for Agents

The broader lesson from NemoClaw is architectural, not vendor-specific. Any serious enterprise agent deployment needs policy enforcement that lives outside the agent. It needs privacy routing that respects data residency requirements. And it needs an audit trail that security teams can actually review.

Most organisations I work with haven’t built any of this yet. They’re still in the phase where the agent’s system prompt is the only thing standing between their data and an unintended action. NemoClaw makes the alternative concrete. Whether you adopt it or build your own version, the pattern is now clear.
