Every enterprise I work with wants autonomous AI agents. Almost none of them have a plan for what happens when those agents do something unexpected. NemoClaw offers a concrete architecture for solving that problem.

The Gap Between Agent Capability and Agent Governance

The conversation in most boardrooms has moved past “should we use AI agents?” to “how do we deploy them at scale?” But the architecture conversation hasn’t kept pace.

Most teams are building agents with powerful reasoning models, tool access and persistent memory. The governance model is usually a system prompt and a prayer. Maybe a content filter on the output.

That worked when agents were stateless chatbots answering questions. It doesn’t work when agents spawn subagents, install packages, maintain shell sessions and run autonomously for hours at a time.

Three Design Patterns From OpenShell

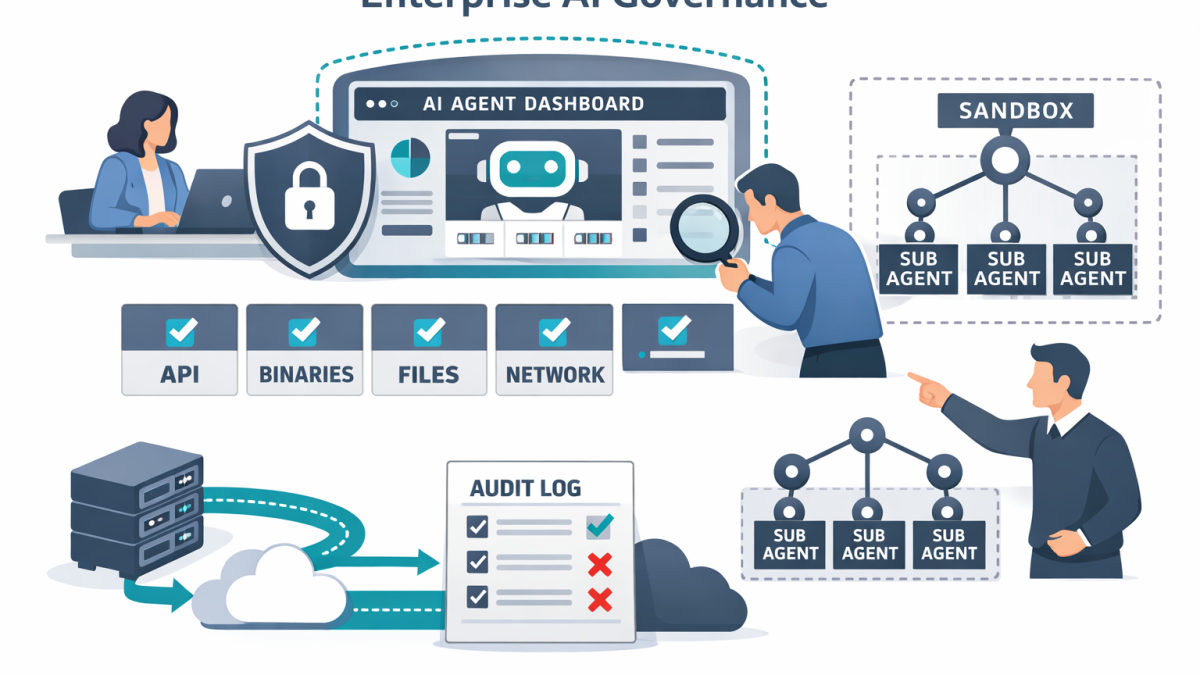

NVIDIA’s OpenShell runtime — the security layer inside NemoClaw — introduces three architectural patterns that every enterprise agent deployment should consider. You don’t have to use OpenShell to learn from it.

Pattern 1: Out-of-process policy enforcement. The policy engine doesn’t live inside the agent. It wraps the agent’s execution environment. The agent can reason about constraints, even propose changes to them. But it cannot override them. This is the fundamental pattern. If your guardrails live in the same process as the thing they’re guarding, they’re not guardrails. They’re suggestions.
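To make the boundary concrete, here is a minimal sketch of an enforcer that only speaks a serialised message protocol. It is not OpenShell's implementation: the message types, the `POLICY` structure and the `handle_request` function are all assumptions made up for illustration. In a real deployment the function would run in a separate process (a sidecar, proxy or wrapper runtime) and receive requests over a socket or pipe; it is called directly here only so the sketch stays runnable.

```python
import json

# Hypothetical policy held by the enforcer. The agent never gets a reference to it.
POLICY = {"allowed_tools": {"read_file", "http_get"}}

# Policy changes proposed by the agent wait here for human review.
PENDING_PROPOSALS = []


def handle_request(raw: str) -> str:
    """Enforcer entry point: takes a JSON request, returns a JSON decision."""
    msg = json.loads(raw)
    kind = msg.get("type")

    if kind == "action":
        allowed = msg.get("tool") in POLICY["allowed_tools"]
        return json.dumps({"decision": "allow" if allowed else "deny"})

    if kind == "propose_policy_change":
        # The agent may ask for a change, but the request is only queued.
        # Nothing in this code path mutates POLICY.
        PENDING_PROPOSALS.append(msg.get("change"))
        return json.dumps({"decision": "queued_for_review"})

    # Unknown message types are denied by default.
    return json.dumps({"decision": "deny"})


if __name__ == "__main__":
    print(handle_request(json.dumps({"type": "action", "tool": "shell_exec"})))
    print(handle_request(json.dumps(
        {"type": "propose_policy_change", "change": {"add_tool": "shell_exec"}}
    )))
```

The design point is that the agent's only lever is a request. Proposing a change and applying a change are different code paths, and only the first is reachable from the agent's side.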

Pattern 2: Granular action-level evaluation. OpenShell’s policy engine evaluates every action at the binary, destination, method and path level. An agent can install a verified skill but cannot execute an unreviewed binary. It can read from an approved API but not write to an unapproved one. This is least-privilege access applied to agent behaviour — the same model we’ve used in network security for decades, adapted for autonomous software.
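A rough sketch of what evaluation at the binary, destination, method and path level could look like follows. The `Action` and `Rule` types, the glob matching and the example hostname are assumptions for illustration, not OpenShell's actual rule format.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase


@dataclass(frozen=True)
class Action:
    binary: str       # program making the request
    destination: str  # host being contacted
    method: str       # e.g. "GET" or "POST"
    path: str         # resource path


@dataclass(frozen=True)
class Rule:
    binary: str
    destination: str
    method: str
    path: str  # glob pattern; note that "*" also matches "/" in fnmatch

    def matches(self, action: Action) -> bool:
        return (
            action.binary == self.binary
            and action.destination == self.destination
            and action.method == self.method
            and fnmatchcase(action.path, self.path)
        )


# Hypothetical allow-list: read access to one approved API, nothing else.
ALLOW_RULES = [
    Rule("curl", "api.internal.example", "GET", "/v1/reports/*"),
]


def evaluate(action: Action, rules=ALLOW_RULES) -> str:
    """Default deny: an action is allowed only if some rule matches all four fields."""
    return "allow" if any(rule.matches(action) for rule in rules) else "deny"


if __name__ == "__main__":
    read = Action("curl", "api.internal.example", "GET", "/v1/reports/2024")
    write = Action("curl", "api.internal.example", "POST", "/v1/reports/2024")
    print(evaluate(read), evaluate(write))
```

Because the default is deny, the same binary talking to the same host is allowed to read but not to write, which is the distinction a tool-level on/off switch cannot express.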

Pattern 3: Privacy-aware model routing. The privacy router decides where inference happens based on policy, not convenience. Sensitive context stays local with Nemotron models running on-device. Frontier models only receive data when the policy permits it. This separates the data-residency decision from the model-capability decision — which is exactly what regulated enterprises need.
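Even a very small router makes the separation visible. The sketch below assumes requests arrive with sensitivity labels already attached upstream; the label names, the `Request` type and the two backend names are hypothetical and do not reflect NemoClaw's router.

```python
from dataclasses import dataclass

LOCAL = "local"        # stands in for an on-device model
FRONTIER = "frontier"  # stands in for a hosted frontier model

# Hypothetical policy: data carrying these labels must never leave the device.
LOCAL_ONLY_LABELS = frozenset({"pii", "financial"})


@dataclass(frozen=True)
class Request:
    prompt: str
    data_labels: frozenset = frozenset()
    needs_frontier: bool = False  # capability preference, set by the caller


def route(req: Request) -> str:
    # Step 1: the data-residency decision, made by policy alone.
    if req.data_labels & LOCAL_ONLY_LABELS:
        return LOCAL
    # Step 2: the model-capability decision, only for data allowed to leave.
    return FRONTIER if req.needs_frontier else LOCAL


if __name__ == "__main__":
    print(route(Request("summarise this contract", frozenset({"pii"}), needs_frontier=True)))
    print(route(Request("draft a haiku", needs_frontier=True)))
```

The capability preference is only consulted after the residency check has passed, so asking for a stronger model can never override the policy.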

The Audit Trail Is the Architecture

One detail that matters more than most teams realise: OpenShell maintains a full audit trail of every allow and deny decision. Every tool execution, every network request, every file access is logged with the policy that governed it.

In my experience, this is where most agent deployments fall apart in practice. Not because the agent fails, but because when it does fail, nobody can explain what happened. The audit trail turns agent behaviour from a black box into a reviewable sequence of governed actions.

For security teams, compliance teams and incident response, this is the difference between “we don’t know what the agent did” and “here’s the complete decision log.”
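As an illustration of what a decision-level record might contain, the sketch below logs each allow or deny decision together with the policy that produced it. The field names and the `AuditLog` class are assumptions, not OpenShell's log schema, and a production trail would be written to append-only storage rather than kept in memory.

```python
import json
from datetime import datetime, timezone


class AuditLog:
    """In-memory stand-in for an append-only decision log."""

    def __init__(self):
        self._lines = []

    def record(self, agent_id: str, action: dict, decision: str, policy_id: str) -> None:
        entry = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "agent_id": agent_id,
            "action": action,        # what the agent tried to do
            "decision": decision,    # "allow" or "deny"
            "policy_id": policy_id,  # which policy governed the decision
        }
        # One JSON object per line keeps the log easy to ship and query.
        self._lines.append(json.dumps(entry, sort_keys=True))

    def entries(self, decision=None):
        parsed = [json.loads(line) for line in self._lines]
        if decision is None:
            return parsed
        return [e for e in parsed if e["decision"] == decision]


if __name__ == "__main__":
    log = AuditLog()
    log.record("agent-1", {"tool": "http_get", "path": "/v1/reports/2024"}, "allow", "net-read-01")
    log.record("agent-1", {"tool": "shell_exec", "cmd": "pip install x"}, "deny", "exec-default-deny")
    for entry in log.entries(decision="deny"):
        print(entry["action"], "blocked by", entry["policy_id"])
```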

Applying These Patterns Without NemoClaw

You don’t have to adopt NemoClaw to use these patterns. But you do need to answer the questions they raise.

Where does your policy enforcement layer sit? If it’s in-process, consider extracting it. A sidecar container, a reverse proxy or a wrapper runtime can enforce constraints without modifying the agent code.

How granular is your action-level governance? If your only control is “allow tool use” or “deny tool use,” it is too coarse to express least privilege. You need per-tool, per-endpoint, per-path controls.

Where does inference happen for sensitive data? If all context flows to a cloud endpoint by default, you’ve made a data-residency decision without realising it. A privacy router — even a simple one — forces that decision to be explicit.

Do you have an audit trail for agent actions? Not model logs. Not token counts. A decision-level trail that shows what the agent tried to do, what was allowed and what was denied.

The Subagent Problem

One area NemoClaw addresses that most frameworks ignore is subagent governance. When a long-running agent spawns child agents to handle subtasks, those child agents can inherit permissions they were never meant to have.

OpenShell’s sandbox scope means policy updates apply at the session level. A subagent inherits the constraints of its parent sandbox, not the unconstrained default. This is the agent equivalent of the principle of least privilege for child processes.
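One way to get this inheritance property in your own system is to derive every child scope from its parent by intersection, so a child can narrow permissions but never widen them. The `Sandbox` class and permission names below are hypothetical and only sketch the idea; they are not how OpenShell represents sandboxes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Sandbox:
    name: str
    allowed: frozenset  # permissions granted to this scope

    def spawn_child(self, name: str, requested=None) -> "Sandbox":
        """Create a subagent scope bounded by this sandbox.

        With no request, the child gets the parent's constraints as-is.
        With a request, the child gets only the overlap with the parent,
        so asking for more than the parent holds has no effect.
        """
        if requested is None:
            granted = self.allowed
        else:
            granted = self.allowed & frozenset(requested)
        return Sandbox(name, granted)

    def permits(self, permission: str) -> bool:
        return permission in self.allowed


if __name__ == "__main__":
    parent = Sandbox("research-agent", frozenset({"read:docs", "net:approved-api"}))
    child = parent.spawn_child("summariser", requested={"read:docs", "exec:shell"})
    print(child.permits("read:docs"), child.permits("exec:shell"))
```

Applied recursively, the same rule bounds grandchildren by the child's scope, so permissions can only shrink as the delegation tree deepens.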

If you’re building agent systems that delegate tasks, this is a pattern you need to implement. Unscoped subagent permissions are one of the largest unaddressed risks in enterprise AI today.

The Architect’s Responsibility

The broader lesson is that agent governance is an architecture problem, not a model problem. You can’t solve it by choosing a safer model or writing a better system prompt. You solve it by designing the execution environment so that unsafe behaviour is structurally impossible.

Together, NemoClaw and OpenShell are the first production-grade implementation of this principle I’ve seen. Whether your stack ends up NVIDIA-based or not, the patterns are worth studying. The agents are getting more capable every month. The governance architecture needs to keep pace.
