Microsoft just made its most interesting AI architecture move in months. And it has nothing to do with a new model.

On March 30, 2026, Microsoft announced Critique — a new multi-model deep research system inside Microsoft 365 Copilot Researcher. The core idea is deceptively simple: instead of relying on one AI model to do everything, split the job between two. One model generates the research. A second model tears it apart.

The result? A 13.88% improvement on the DRACO deep research benchmark. That is not a marginal gain. That is a structural improvement in how AI-generated research gets produced.

One Model Writes. Another Model Reviews.

Here is what Critique actually does.

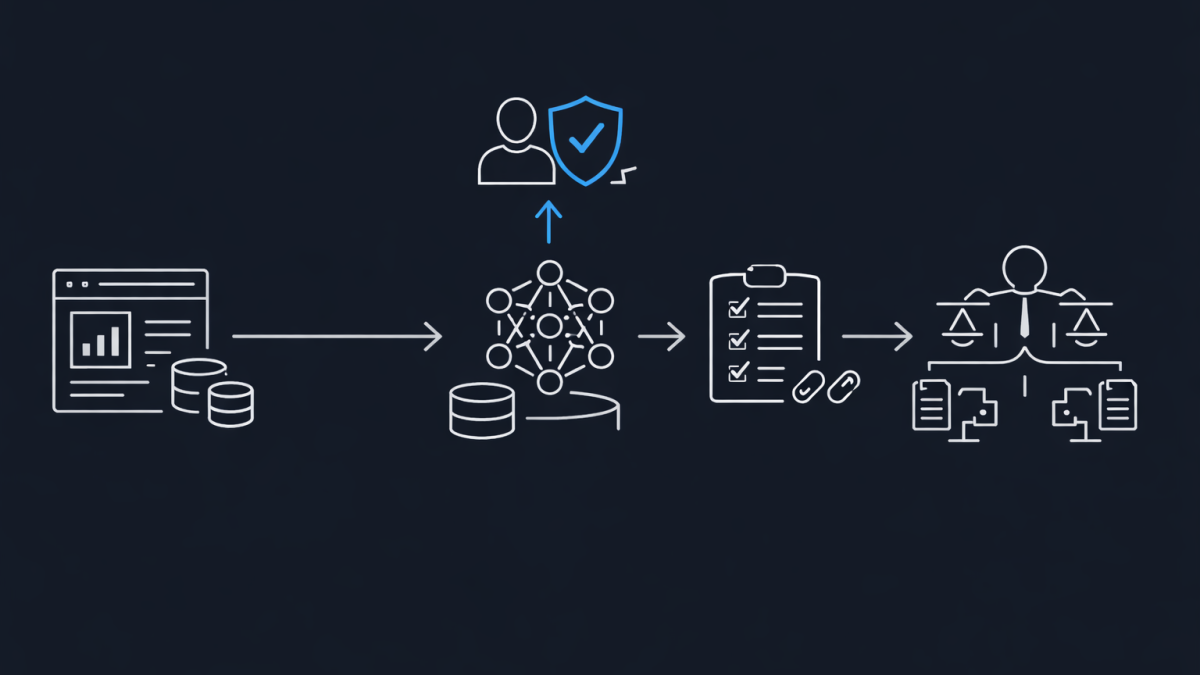

When you submit a complex research query through Copilot Researcher, the first model — currently GPT — handles the heavy lifting. It plans the research task, iterates through retrieval, gathers sources, and produces a structured draft report.

Then a second model — currently Claude from Anthropic — steps in as a reviewer. Not a co-author. A reviewer. It evaluates the draft across specific dimensions: source reliability, report completeness, and evidence grounding. Every key claim needs to be anchored to verifiable sources with precise citations.

This is not two models chatting with each other. It is a rubric-based evaluation loop, modelled after academic and professional peer review. The generator generates. The reviewer reviews. The final output is the result of that tension.

Why This Architecture Matters

For the past two years, the AI industry has been locked in a model arms race. Bigger parameters, higher benchmarks, more training data. But Critique takes a fundamentally different approach. It acknowledges something most vendors will not say out loud: a single model — no matter how large — has blind spots.

By separating generation from evaluation, Microsoft is doing something architects have understood for decades in software design. You do not let the same system that produces output also validate it. That is how you get unchecked errors and hallucinations at scale.

In my experience working with enterprise AI deployments, the biggest complaint from senior leaders is not that AI is too slow. It is that they cannot trust the output. Critique directly addresses that trust gap by building review into the architecture itself.

The Benchmark Numbers

Microsoft evaluated Critique on the DRACO benchmark — 100 complex deep research tasks across 10 domains including medicine, technology, and law. The results were judged using GPT-5.2 as the evaluator, the strictest of the three judge models reported in the benchmark paper.

The numbers speak for themselves:

- Breadth and Depth of Analysis: +3.33 improvement

- Presentation Quality: +3.04 improvement

- Factual Accuracy: +2.58 improvement

- Overall: +7.0 points aggregate, all dimensions statistically significant (p < 0.0001)

That last point is important. These are not cherry-picked results. Across five independent runs per question, averaged over the full dataset, Critique consistently outperformed the single-model approach. In 8 out of 10 domains, improvements were statistically significant.

Council: Side-by-Side Model Comparison

Alongside Critique, Microsoft also introduced Council — a different multi-model approach. Instead of one model reviewing another, Council runs both an Anthropic model and an OpenAI model simultaneously on the same research query. Each produces a complete, standalone report.

A dedicated judge model then evaluates both reports and produces a distilled summary. It highlights where the models agree, where they diverge, and what unique contributions each one brings.

This is particularly interesting for high-stakes research where you want to see how different AI systems frame the same problem. If both models arrive at the same conclusion through different reasoning paths, your confidence goes up. If they diverge significantly, you know exactly where to dig deeper.

What This Means for Enterprise AI Strategy

This move signals something bigger than a product feature. It signals the end of the single-model era for serious enterprise work.

If Microsoft — OpenAI’s largest investor — is routing research tasks through Claude for validation, the message is clear. No single model vendor has a monopoly on quality. The future of enterprise AI is multi-model by design, not by accident.

For IT leaders and architects evaluating AI platforms, this changes the calculus. The question is no longer “which model should we standardise on?” It is “how do we build workflows that use the right model for the right task?”

A few things I would be watching closely:

Model governance becomes critical. When you have multiple models in a single workflow, you need clear policies on data routing, compliance boundaries, and which models touch which data. This is especially relevant for Australian organisations operating under privacy legislation and Essential 8 requirements.

Cost structures will shift. Running two frontier models on every research query is not cheap. Expect Microsoft to build tiering into this — simpler queries get single-model treatment, complex queries get the full Critique pipeline.

The reviewer role will expand. Right now, Critique is bidirectional in concept — Microsoft has said they expect GPT and Claude to swap roles in the future. That suggests a future where the reviewer model is selected dynamically based on the task domain.

My Take

Critique is one of the most architecturally honest moves I have seen from a major AI vendor this year. Instead of pretending one model can do everything, Microsoft has built a system that acknowledges the limits of any single model and compensates with structural tension.

The peer review analogy is not accidental. We figured out centuries ago that the best way to improve research quality is to have someone else check the work. It is remarkable that it took the AI industry this long to build that principle into the architecture itself.

The real test will be what happens when this multi-model approach moves beyond research reports and into operational workflows — code review, security analysis, compliance checking. That is where the architecture pattern behind Critique becomes genuinely transformative.

For now, Critique is available to Microsoft 365 Copilot customers in the Frontier program. If you have access, I would recommend testing it on your most complex research tasks and comparing the output quality against single-model results. The difference should be immediately obvious.

- OpenAI Launched a $100/Month ChatGPT Pro Plan. Here’s Exactly What You Get for the Money

- A Practical Checklist for Evaluating Gemini 3.1 Flash Lite vs GPT Claude

- Microsoft Is Building Its Own OpenClaw for Enterprise. Here’s Why That Changes the Agent Landscape

- From Demo to Production with Microsoft Agent Framework for Architects

- Microsoft Agent Framework Foundry MCP and Aspire in Practice