For the last three years, every major AI model has been a generalist. Train on the internet, cover every domain from poetry to protein folding, then let enterprises fine-tune at the edges. OpenAI just broke that pattern.

GPT-Rosalind is not a general-purpose frontier model with a life sciences skin. It is a purpose-built research model for pharmaceutical discovery, molecular biology, and clinical workflows — named, pointedly, after Rosalind Franklin. That naming choice tells you exactly what OpenAI thinks this model is going to do to pharma AI.

Why a Specialised Model Is a Bigger Deal Than People Realise

The conventional wisdom in enterprise AI for the last 18 months has been simple. Buy the biggest frontier model you can, wrap it in RAG, feed it your domain knowledge, and you get 90% of a specialist system for 10% of the effort.

That has worked surprisingly well in legal, finance, and customer service. It has not worked in life sciences.

Pharma is the domain where general-purpose models have struggled most. Hallucinated protein structures. Confident but wrong molecular interactions. Clinical trial reasoning that sounds credible but falls apart when a scientist with a PhD actually checks the output. The cost of a wrong answer in drug discovery is not embarrassment; it is millions of dollars of wasted wet lab work or, worse, a candidate that fails in Phase 2.

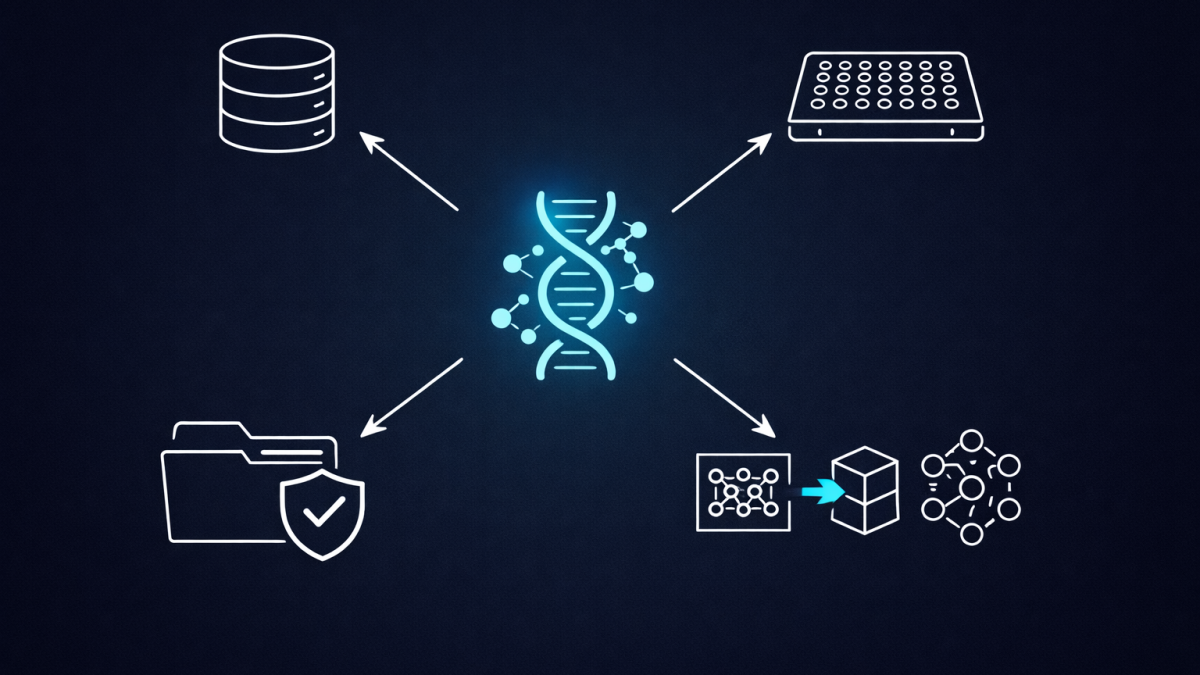

A model trained from the ground up on protein structures, assay data, clinical trial records, and molecular simulation outputs changes that calculus. It is not smarter in the general sense. It is correct more often in the narrow sense that actually matters.

What Changes for Pharma AI Teams

I have spent enough time with AI leaders in health and life sciences to know what this announcement will do to their roadmaps this week.

Most pharma AI programs right now are built on one of three foundations — OpenAI GPT-5.x, Anthropic Claude Opus, or a self-hosted open model like Llama or Mistral fine-tuned on internal data. Every one of those teams is about to have the same meeting: do we rebuild on GPT-Rosalind?

The honest answer is usually yes, eventually. When a model is purpose-built for your domain by the lab that invented the category, the gap in accuracy and reasoning quality is not marginal. It compounds. Every downstream system — literature review agents, molecule design copilots, trial protocol reviewers — benefits from a stronger base layer.

But the migration is not trivial. Prompt libraries, evaluation suites, safety guardrails, and integration points all get rewritten. I would expect a serious pharma AI team to spend three to six months validating GPT-Rosalind against their existing stack before they commit.

The Signal This Sends to Other Verticals

This is the part most people will miss.

OpenAI has now set a precedent: verticalised frontier models for domains where general-purpose reasoning is not good enough. If GPT-Rosalind works commercially, expect the same pattern in financial services, legal, defence, and possibly energy within 18 months.

That changes the strategic question every CIO and head of AI should be asking right now. The question is no longer “which frontier model do we standardise on?” It is “which of our domains will get a vertical model first, and are we ready to migrate when it arrives?”

Teams that invested early in model-agnostic abstraction layers are going to be glad they did. Teams that hard-wired everything to a specific model API are about to feel the pain.

What I Would Actually Do With This Today

If I were advising a life sciences organisation this week, I would do three things.

First, get GPT-Rosalind into an evaluation environment as soon as OpenAI opens access. Do not wait for a formal procurement cycle. The teams that test specialised models early get a compounding advantage in prompt engineering and pipeline design.

Second, revisit the evaluation suite. General-purpose benchmarks like MMLU or SWE-bench tell you almost nothing about whether a model can reason correctly about a novel kinase inhibitor. Build domain-specific evals, or borrow from the published benchmarks cited in the model’s own release notes. The team with the best evals always wins in enterprise AI, because they are the first to know when something actually works.
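To make “build domain-specific evals” concrete, here is a minimal sketch of an eval harness, assuming a model can be called as a plain function from prompt to answer. The names `EvalCase` and `run_eval`, the placeholder questions, and the exact-match scoring are hypothetical illustrations, not anything OpenAI has published; a real pharma eval would use scientist-curated cases and far richer grading than string equality.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class EvalCase:
    """One domain-specific test item: a prompt plus a reference answer."""
    prompt: str
    expected: str  # in practice, curated and checked by a domain scientist


def run_eval(model: Callable[[str], str], cases: List[EvalCase]) -> float:
    """Return the fraction of cases where the model's answer matches the reference.

    Scoring is normalised exact match (case- and whitespace-insensitive),
    a deliberately simple stand-in for expert or rubric-based grading.
    """
    if not cases:
        return 0.0
    correct = sum(
        1
        for case in cases
        if model(case.prompt).strip().lower() == case.expected.strip().lower()
    )
    return correct / len(cases)


if __name__ == "__main__":
    # Hypothetical placeholder cases; not real assay or trial data.
    cases = [
        EvalCase("Placeholder question A", "answer a"),
        EvalCase("Placeholder question B", "answer b"),
    ]
    # A stub "model" that only answers the first question correctly.
    stub_answers = {"Placeholder question A": "Answer A"}
    score = run_eval(lambda p: stub_answers.get(p, ""), cases)
    print(f"accuracy: {score:.2f}")  # prints "accuracy: 0.50"
```

Because the harness depends only on a prompt-to-answer callable, the same cases can be rerun unchanged against an existing stack and against a new model, which is exactly the side-by-side comparison a validation period needs.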

Third, separate the model layer from the agent layer architecturally. Whatever you build on GPT-Rosalind should be able to swap to a successor model, or to an Anthropic or Google equivalent when those arrive. Vertical models are going to proliferate. Lock-in risk is about to get worse, not better.
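One way to keep the agent layer separate from the model layer is to have agents depend on a small interface rather than on a vendor SDK. The sketch below assumes Python 3.8+; `ModelClient`, `StubModel`, `build_model`, the registry keys, and `LiteratureReviewAgent` are all hypothetical names, and the stub stands in for whatever real vendor client you would wrap. No actual OpenAI, Anthropic, or Google API is shown.

```python
from typing import Callable, Dict, Protocol


class ModelClient(Protocol):
    """The only surface agents are allowed to depend on."""

    def complete(self, prompt: str) -> str: ...


class StubModel:
    """Hypothetical stand-in for a thin wrapper around a real vendor SDK."""

    def __init__(self, name: str) -> None:
        self.name = name

    def complete(self, prompt: str) -> str:
        # A real adapter would call the vendor API here and return its text.
        return f"[{self.name}] {prompt}"


# Model choice lives in configuration, not in agent code.
# Keys are illustrative placeholders, not real model identifiers.
MODEL_REGISTRY: Dict[str, Callable[[], ModelClient]] = {
    "life-sciences-model": lambda: StubModel("life-sciences-model"),
    "fallback-general-model": lambda: StubModel("fallback-general-model"),
}


def build_model(key: str) -> ModelClient:
    """Look up a model adapter by config key; raises KeyError if unknown."""
    return MODEL_REGISTRY[key]()


class LiteratureReviewAgent:
    """An agent that knows nothing about which vendor sits underneath."""

    def __init__(self, model: ModelClient) -> None:
        self.model = model

    def summarise(self, abstract: str) -> str:
        return self.model.complete(f"Summarise for a medicinal chemist: {abstract}")


if __name__ == "__main__":
    # Same agent code, two different underlying models.
    for key in ("life-sciences-model", "fallback-general-model"):
        agent = LiteratureReviewAgent(build_model(key))
        print(agent.summarise("placeholder abstract"))
```

With this shape, moving to a successor or competing vertical model means writing one new adapter and changing a config key, rather than rewriting every agent.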

The Part I Am Still Thinking About

There is a version of this story where specialised models fragment the enterprise AI market in a way that hurts everyone. Every vendor ships a vertical model. Every enterprise ends up running five different foundation models with five different safety profiles, five different prompt conventions, and five different audit trails. Governance gets harder, not easier.

There is another version where specialisation drives real scientific breakthroughs, pharma gets faster and cheaper, and the economics of drug discovery finally tilt back toward smaller labs and academic researchers. That would be a genuinely good outcome.

I do not know which version we get. What I do know is that the era of “one frontier model rules them all” ended the moment OpenAI named a model after Rosalind Franklin and pointed it at pharmaceutical research. The shape of enterprise AI just changed, and life sciences is the first domain to feel it.

Every other regulated industry should be watching very closely.
