When Anthropic released Claude Opus 4.7 on April 16, Cursor’s CEO Michael Truell didn’t bury the headline. His quote on Anthropic’s announcement page was direct: “On CursorBench, Opus 4.7 is a meaningful jump in capabilities, clearing 70% versus Opus 4.6 at 58%.”

That’s a 12-percentage-point lift on a benchmark specifically designed to resist the inflation that plagues public evals. And it matters far more than the number suggests.

Why CursorBench Is Different

Most public coding benchmarks are losing their usefulness at the frontier. SWE-bench Verified draws tasks from public repos that end up in training data. OpenAI stopped reporting on it entirely after finding that frontier models could reproduce gold patches from memory and that nearly 60% of unsolved problems had flawed tests.

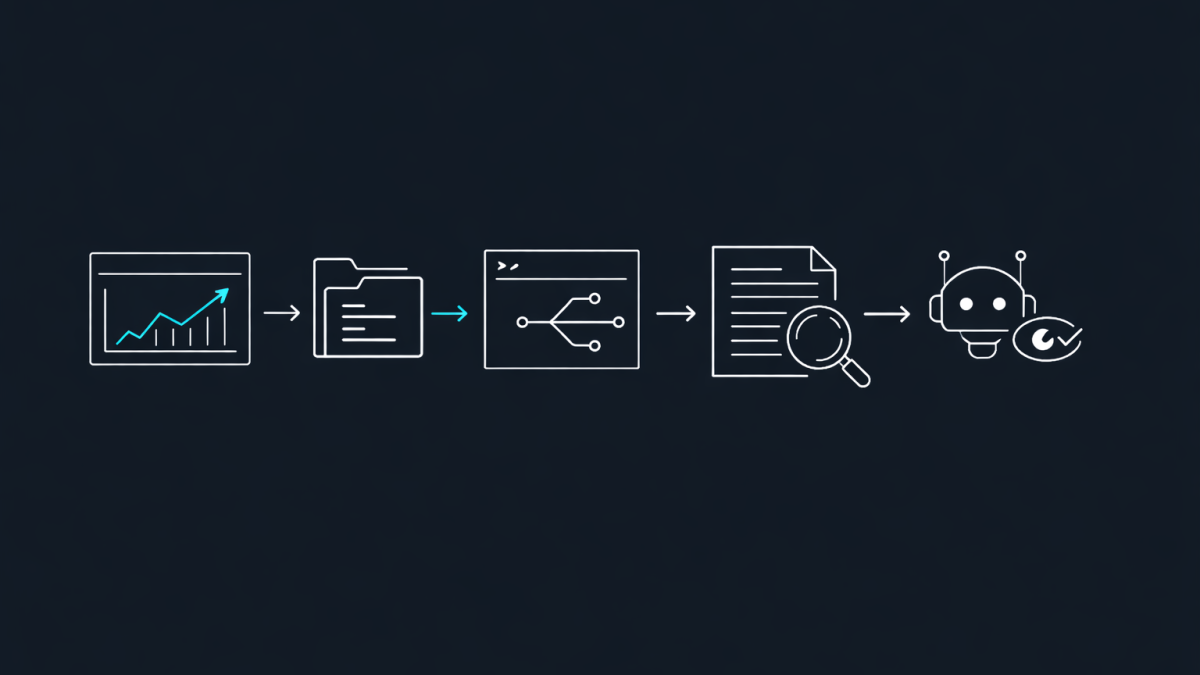

CursorBench takes a different approach. Tasks are sourced from real Cursor sessions using Cursor Blame, which traces committed code back to the agent request that produced it. Many tasks come from Cursor’s internal codebase and controlled sources, reducing contamination risk. The task descriptions are intentionally short and underspecified — matching how developers actually talk to agents, not the detailed GitHub issues that public benchmarks use.

The latest version, CursorBench-3, has roughly doubled in problem scope from the original. Tasks now involve multi-workspace environments with monorepos, production log investigations, and long-running experiments. This isn’t bug-fixing in a single file. It’s the kind of work that separates useful agents from demo-ware.

What the 58% to 70% Jump Actually Means

At frontier levels, public benchmarks are increasingly saturated. Models like Haiku can match or exceed GPT-5 on some of them. CursorBench still produces meaningful separation between models that developers experience as genuinely different.

So when Opus 4.7 clears 70% on a benchmark where Opus 4.6 sat at 58%, that’s not a marginal improvement on an easy test. It’s a substantial gain on tasks that are longer, harder, and more representative of real coding work than anything on the public leaderboards.
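To make the size of that jump concrete, here is a small sketch that expresses the same two scores three ways: the absolute lift, the relative lift, and the drop in the share of failed tasks. It treats 58% and 70% as exact figures, which is an assumption, since the quote says only that Opus 4.7 "cleared" 70%. The function name `gain_summary` is hypothetical.

```python
def gain_summary(old_pct: float, new_pct: float) -> dict[str, float]:
    """Summarize a benchmark score change three ways.

    Both inputs are percentages in the range 0-100.
    """
    if not (0 < old_pct < 100 and 0 <= new_pct <= 100):
        raise ValueError("scores must be percentages, with 0 < old_pct < 100")

    # Absolute change in percentage points.
    absolute_pp = new_pct - old_pct
    # Change relative to the old score.
    relative_pct = absolute_pp / old_pct * 100
    # Share of previously failed tasks that are now passed.
    old_fail = 100 - old_pct
    new_fail = 100 - new_pct
    failure_reduction_pct = (old_fail - new_fail) / old_fail * 100

    return {
        "absolute_pp": absolute_pp,
        "relative_pct": relative_pct,
        "failure_reduction_pct": failure_reduction_pct,
    }


# Assumed exact scores: Opus 4.6 at 58%, Opus 4.7 at 70%.
summary = gain_summary(58.0, 70.0)
for name, value in summary.items():
    print(f"{name}: {value:.1f}")
```

Under those assumptions, the 12-point lift is roughly a 21% relative improvement and removes a bit under 29% of the tasks the older model failed.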

The testimonials from other companies reinforce this. Cursor’s in-house model, Composer 2, scored 73.7% on Terminal-Bench 2.0, compared with 44.2% and 38.0% for earlier versions. But Opus 4.7’s jump on CursorBench, a benchmark designed to be harder and more realistic, is a different kind of signal.

The Practical Improvements That Matter

Having used Opus 4.6 extensively, I find that the areas Anthropic highlights for 4.7 align with the friction points I’ve experienced in real agentic workflows.

Instruction following. Opus 4.7 takes instructions more literally. Anthropic notes this can actually break prompts written for earlier models that interpreted instructions loosely. That’s the kind of improvement that tells you something real changed in the model’s attention to detail.

Long-running autonomy. Scott Wu, CEO of Cognition (the company behind Devin), said Opus 4.7 “works coherently for hours, pushes through hard problems rather than giving up, and unlocks a class of deep investigation work we couldn’t reliably run before.” That matches what I see as the real bottleneck in agentic coding: not initial capability, but sustained reliability over complex multi-step tasks.

Self-verification. Vercel’s Joe Haddad reported that Opus 4.7 “does proofs on systems code before starting work” — behaviour not seen in earlier Claude models. A model that verifies its own logic before executing is a model you can trust with less supervision.

Vision improvements. Opus 4.7 accepts images up to 2,576 pixels on the long edge, more than three times what prior Claude models accepted. For anyone doing computer-use agent work or extracting data from complex diagrams, that’s a significant capability unlock.
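If you prepare screenshots or diagrams yourself, one way to work with that limit is to compute target dimensions before resizing. The sketch below is only illustrative: the 2,576-pixel figure comes from the paragraph above, the function name `fit_long_edge` is hypothetical, and whether the API rescales oversized images on its own is not something this article establishes.

```python
def fit_long_edge(width: int, height: int, max_long_edge: int = 2576) -> tuple[int, int]:
    """Return dimensions scaled down so the longer side fits max_long_edge.

    Images already within the limit are returned unchanged; the aspect
    ratio is preserved up to rounding to whole pixels.
    """
    if width <= 0 or height <= 0 or max_long_edge <= 0:
        raise ValueError("width, height, and max_long_edge must be positive")

    long_edge = max(width, height)
    if long_edge <= max_long_edge:
        return width, height

    # Scale both sides by the same factor; never round a side down to zero.
    new_width = max(1, round(width * max_long_edge / long_edge))
    new_height = max(1, round(height * max_long_edge / long_edge))
    return new_width, new_height


# A 5152x2898 screenshot is halved; a 1920x1080 one is left alone.
print(fit_long_edge(5152, 2898))
print(fit_long_edge(1920, 1080))
```

The returned pair can be passed to whatever image library you already use for the actual resize.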

What This Means for How We Work

The shift from Opus 4.6 to 4.7 is happening in a broader context. Cursor’s own research, published just days before this release, found that better AI models lead to greater AI demand — a Jevons-like effect where efficiency gains increase total consumption rather than reducing it. Developers at 500 companies using Cursor increased their AI usage by 44% over eight months spanning the releases of Opus 4.5 and GPT-5.2.

More importantly, after a 4-6 week lag, developers shifted to more complex tasks. High-complexity messages grew 68% compared to 22% for low-complexity ones. The biggest growth was in documentation (+62%), architecture (+52%), and code review (+51%).

Opus 4.7 is positioned to accelerate that shift further. When a model clears 70% on a realistic benchmark of multi-file, multi-step coding tasks, the work you hand off changes fundamentally.

The Benchmark Arms Race Is Over — Real-World Evals Won

The most important thing about the CursorBench number isn’t the score itself. It’s that we’re finally seeing the industry move toward evaluations that match how developers actually use AI agents.

Public benchmarks served their purpose in the early days. But when models can memorise gold patches and Haiku can match GPT-5 on saturated tests, those numbers stop meaning anything. CursorBench, built from real sessions with intentionally underspecified tasks and agentic graders, is closer to the truth.

Opus 4.7 scoring 70% on that benchmark tells me more about its real-world utility than any number on SWE-bench ever could. And if you’re making decisions about which models to trust with your hardest coding work, that’s the number worth paying attention to.